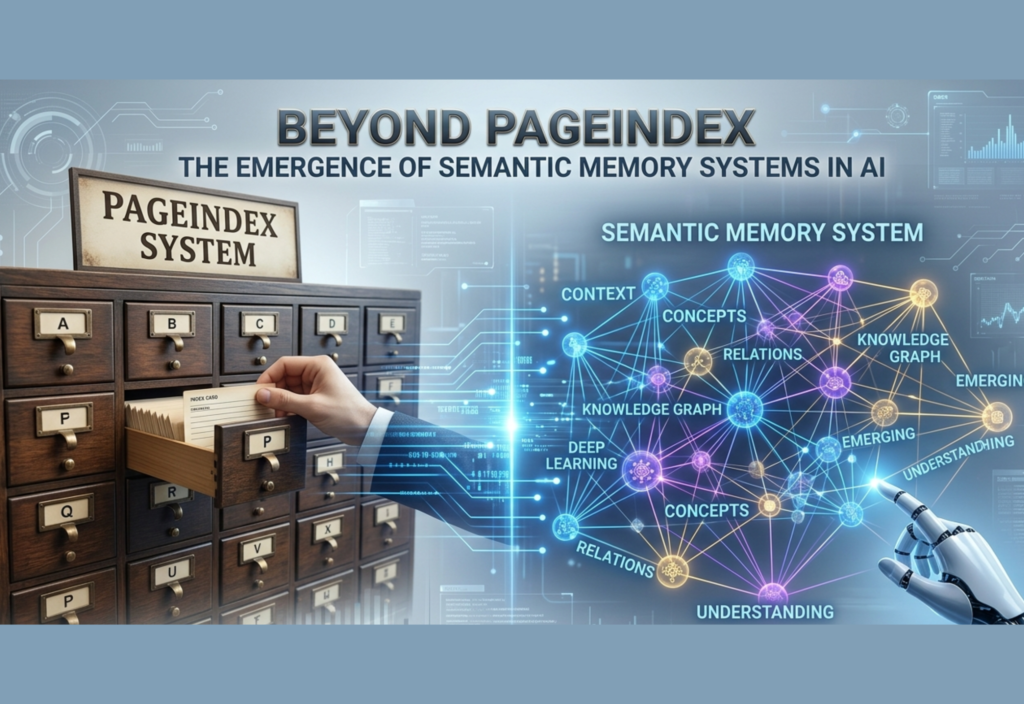

Artificial intelligence is entering a transformative era. For decades, a central challenge in building AI applications has been how to retrieve and process information efficiently. From early keyword-based search engines to modern vector databases and innovations like PageIndex, each step has brought improvements in speed, relevance, and scalability. Yet, despite these advancements, one fundamental limitation persists: AI systems still largely retrieve information rather than truly understand and remember it.

A new paradigm is now emerging: Semantic Memory Systems in AI. This shift is more than an incremental improvement. It signals an architectural evolution in which AI moves from being a reactive tool to a system capable of building structured knowledge, maintaining context over time, and reasoning in a more human-like way.

The Evolution of AI Retrieval Systems

To understand the importance of semantic memory, it is essential to trace how AI retrieval systems have evolved.

1. Keyword-Based Search

Early systems relied on exact keyword matching. While simple and fast, they struggled with ambiguity, synonyms, and context. A search query often produced irrelevant results unless carefully phrased.
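The synonym problem shows up even in a minimal sketch of exact keyword matching. The function name `keyword_search` and the sample documents are illustrative assumptions, not taken from any particular search engine.

```python
def keyword_search(query: str, documents: list[str]) -> list[str]:
    """Return the documents that contain every query term as an exact token."""
    terms = query.lower().split()
    return [
        doc for doc in documents
        if all(term in doc.lower().split() for term in terms)
    ]


docs = [
    "How to repair a car engine",
    "Automobile maintenance guide",
    "Baking bread at home",
]

# "car" and "automobile" mean the same thing to a reader,
# but exact matching treats them as unrelated strings.
car_hits = keyword_search("car", docs)
automobile_hits = keyword_search("automobile", docs)
print(car_hits)
print(automobile_hits)
```

Each query finds only the document that happens to use the same word, which is why results depended so heavily on how the query was phrased.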

2. Vector Databases and Embeddings

The introduction of embeddings transformed retrieval. Instead of matching words, systems began matching meanings by converting text into numerical vectors. This enabled semantic similarity search, making results more relevant even when exact keywords were absent.
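As a rough illustration of matching by meaning, the sketch below ranks documents by cosine similarity between vectors. The three-dimensional vectors are hand-written toy values chosen for the example; a real system would obtain them from an embedding model, and the query text is likewise an assumption.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy embeddings, written by hand for illustration only.
toy_embeddings = {
    "How to repair a car engine": [0.9, 0.1, 0.0],
    "Automobile maintenance guide": [0.8, 0.2, 0.1],
    "Baking bread at home": [0.0, 0.1, 0.9],
}

# Stands in for the embedding of a query such as "fixing my vehicle".
query_vector = [0.85, 0.15, 0.05]

ranked = sorted(
    toy_embeddings,
    key=lambda doc: cosine_similarity(query_vector, toy_embeddings[doc]),
    reverse=True,
)
for doc in ranked:
    print(doc, round(cosine_similarity(query_vector, toy_embeddings[doc]), 3))
```

Both vehicle-related documents score close to the query even though neither shares a keyword with it, while the baking document ranks last.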

However, vector systems still have limitations:

- They lack persistent memory

- They depend heavily on external databases

- They struggle with multi-step reasoning

- Context is often limited to a single query

3. Structured Indexing (PageIndex and Similar Systems)

Newer approaches like PageIndex improved how information is organized and accessed. By structuring data more intelligently, these systems reduce noise and improve retrieval precision.

But even here, the core model remains unchanged:

Search → Retrieve → Generate → Forget

This cycle highlights the absence of long-term knowledge retention.
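The cycle can be sketched as a stateless function. Everything here is a simplified assumption: `search` uses word overlap, and `generate` is a stand-in for a language model call rather than a real API.

```python
def search(query: str, corpus: dict[str, str]) -> list[str]:
    """Search: ids of documents that share at least one word with the query."""
    words = set(query.lower().split())
    return [
        doc_id for doc_id, text in corpus.items()
        if words & set(text.lower().split())
    ]


def retrieve(doc_ids: list[str], corpus: dict[str, str]) -> list[str]:
    """Retrieve: fetch the text of the matching documents."""
    return [corpus[doc_id] for doc_id in doc_ids]


def generate(query: str, passages: list[str]) -> str:
    """Generate: placeholder for a model call that would use the passages."""
    return f"Answer to {query!r} based on {len(passages)} passage(s)."


def answer(query: str, corpus: dict[str, str]) -> str:
    doc_ids = search(query, corpus)
    passages = retrieve(doc_ids, corpus)
    response = generate(query, passages)
    # Forget: nothing from this call is stored, so the next call starts from zero.
    return response


corpus = {
    "a": "paris is the capital of france",
    "b": "bread needs yeast",
}
first = answer("capital of france", corpus)
second = answer("capital of france", corpus)
print(first)
```

Asking the same question twice repeats the full search and retrieval work, because `answer` keeps no state between calls.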

The Concept of Semantic Memory

In human cognition, memory is typically divided into different types. One of the most important is semantic memory: our ability to store general knowledge about the world, such as facts, meanings, and relationships between concepts.

For example:

- Knowing that water boils at 100°C at sea-level pressure

- Understanding that a cat is a type of animal

- Recognizing the relationship between cause and effect

Semantic memory is not tied to a specific event; it is structured knowledge that persists over time.

Applying this concept to AI means creating systems that:

- Store knowledge in a structured, interconnected form

- Continuously refine understanding

- Recall information contextually

- Build upon previous knowledge without starting from scratch

From Retrieval to Understanding

The shift from PageIndex to semantic memory systems represents a fundamental change in how AI operates.

Traditional Retrieval Approach

- Data is stored externally

- AI retrieves relevant chunks when needed

- No persistent understanding is formed

- Each interaction is largely independent

Semantic Memory Approach

- Knowledge is kept in a persistent, structured store that the system itself maintains

- Relationships between concepts are maintained

- AI can reason across multiple domains

- Learning is continuous and accumulative

This transformation is similar to the difference between:

- A library that fetches books on demand

- A mind that understands and connects ideas

Core Components of Semantic Memory Systems

Several technological advancements are converging to enable semantic memory in AI:

1. Knowledge Graphs

Knowledge graphs represent information as nodes (entities) and edges (relationships). This allows AI to understand how different concepts are connected.

For instance:

“Paris → Capital of → France”

This simple relationship enables deeper reasoning compared to isolated data points.
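A minimal sketch of such a graph, stored as subject-relation-object triples, shows how chaining edges yields an answer that no single stored fact contains. The class name `KnowledgeGraph`, the relation labels, and the second triple are assumptions added for illustration.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Tiny in-memory triple store: (subject, relation) -> set of objects."""

    def __init__(self) -> None:
        self._edges: dict[tuple[str, str], set[str]] = defaultdict(set)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self._edges[(subject, relation)].add(obj)

    def objects(self, subject: str, relation: str) -> set[str]:
        # .get avoids creating empty entries for unknown keys.
        return set(self._edges.get((subject, relation), set()))

    def follow(self, start: str, relations: list[str]) -> set[str]:
        """Follow a chain of relations from `start` and return what is reached."""
        frontier = {start}
        for relation in relations:
            frontier = {
                obj for node in frontier for obj in self.objects(node, relation)
            }
        return frontier


kg = KnowledgeGraph()
kg.add("Paris", "capital_of", "France")
kg.add("France", "located_in", "Europe")

# Two-hop question: on which continent is the country whose capital is Paris?
continent = kg.follow("Paris", ["capital_of", "located_in"])
print(continent)
```

Neither stored triple says that Paris is in Europe; the answer comes from traversing two edges, which is the kind of reasoning isolated data points cannot support.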

2. Memory-Augmented Architectures

These systems integrate neural networks with external or internal memory modules. Unlike traditional models, they can store intermediate knowledge and reuse it later.

This allows AI to:

- Remember previous interactions

- Build layered understanding

- Improve performance over time
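The idea of a memory module that outlives a single interaction can be sketched with an explicit key-value store. This is a deliberately simplified assumption: research memory-augmented networks typically use learned, differentiable read and write operations, whereas the hypothetical `MemoryStore` and `Assistant` classes below use plain dictionary lookups and a made-up "remember"/"recall" message format.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MemoryStore:
    """Memory that lives outside any single interaction."""
    facts: dict[str, str] = field(default_factory=dict)

    def write(self, key: str, value: str) -> None:
        self.facts[key] = value

    def read(self, key: str) -> Optional[str]:
        return self.facts.get(key)


class Assistant:
    """Toy assistant that understands 'remember <key> = <value>' and 'recall <key>'."""

    def __init__(self, memory: MemoryStore) -> None:
        self.memory = memory

    def handle(self, message: str) -> str:
        if message.startswith("remember "):
            key, _, value = message[len("remember "):].partition("=")
            self.memory.write(key.strip(), value.strip())
            return "Noted."
        if message.startswith("recall "):
            value = self.memory.read(message[len("recall "):].strip())
            return value if value is not None else "I have no memory of that."
        return "Unrecognized message."


memory = MemoryStore()

# First session stores a fact.
session_one = Assistant(memory)
ack = session_one.handle("remember preferred_language = Python")

# A later, separate session can still use it because the store is shared.
session_two = Assistant(memory)
recalled = session_two.handle("recall preferred_language")
print(recalled)
```

The second `Assistant` instance has no history of its own, yet it answers correctly because the knowledge lives in the memory module rather than in the interaction.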

3. Continual Learning

Traditional AI models require retraining to learn new information. Continual learning systems update knowledge incrementally while preserving what was learned earlier. The failure they are designed to avoid, in which new training overwrites old knowledge, is known as catastrophic forgetting.

This is critical for building long-term memory.
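One common family of continual-learning techniques is rehearsal (also called experience replay): keep a small sample of earlier examples and mix some of them into each new training batch. The sketch below shows only that bookkeeping, using reservoir sampling so that every example seen so far has an equal chance of being retained. The class name `ReplayBuffer`, its parameters, and the task labels are assumptions; no model training is shown.

```python
import random


class ReplayBuffer:
    """Fixed-size uniform sample of past examples (reservoir sampling)."""

    def __init__(self, capacity: int, seed: int = 0) -> None:
        self.capacity = capacity
        self.items: list = []
        self.seen = 0
        self._rng = random.Random(seed)

    def add(self, example) -> None:
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
            return
        # Replace a stored item with probability capacity / seen.
        slot = self._rng.randrange(self.seen)
        if slot < self.capacity:
            self.items[slot] = example

    def mixed_batch(self, new_examples: list, replay_count: int) -> list:
        """New examples followed by up to `replay_count` replayed old ones."""
        k = min(replay_count, len(self.items))
        return list(new_examples) + self._rng.sample(self.items, k)


buffer = ReplayBuffer(capacity=3)
for i in range(100):
    buffer.add(("task_a", i))  # examples from an earlier task

# When training on a new task, old examples are rehearsed alongside new ones.
batch = buffer.mixed_batch([("task_b", 0), ("task_b", 1)], replay_count=2)
print(batch)
```

Memory use stays bounded no matter how many examples arrive, which is the trade-off rehearsal methods make: a small, representative slice of the past instead of all of it.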

4. Contextual and Relational Embeddings

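Modern embedding techniques go beyond plain similarity matching. Contextual embeddings represent a word differently depending on the text around it, and relational embeddings encode how entities are linked, including hierarchies. Both make it easier for AI to build structured knowledge.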
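One well-known example of an embedding that encodes relationships is the translation-based approach used by TransE, in which a relation is a vector that should move the head entity onto the tail entity (head + relation ≈ tail). The sketch below uses hand-picked two-dimensional vectors purely for illustration; in practice these vectors are learned from data, and the names used here are assumptions.

```python
import math


def transe_score(head: list[float], relation: list[float], tail: list[float]) -> float:
    """Negative Euclidean distance between head + relation and tail.

    Higher (closer to zero) means the triple is more plausible.
    """
    return -math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))


# Hand-picked toy vectors; real embeddings would be learned.
entity = {
    "Paris": [1.0, 0.0],
    "France": [1.0, 1.0],
    "Berlin": [3.0, 0.0],
    "Germany": [3.0, 1.0],
}
capital_of = [0.0, 1.0]  # the same offset links each capital to its country


def best_tail(head: str) -> str:
    """Entity whose vector is closest to head + capital_of."""
    return max(
        entity,
        key=lambda name: transe_score(entity[head], capital_of, entity[name]),
    )


print(best_tail("Paris"))
print(best_tail("Berlin"))
```

Because one relation vector serves every capital-country pair, the geometry itself carries the relationship rather than just a notion of "similar" text.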

Advantages of Semantic Memory Systems

The benefits of this new paradigm are significant:

1. Persistent Knowledge

AI systems no longer need to “start fresh” with every query. They retain and build upon prior knowledge.

2. Improved Reasoning

By understanding relationships, AI can perform deeper analysis and generate more meaningful insights.

3. Context Awareness

Semantic memory allows AI to maintain context across long conversations and complex tasks.

4. Efficiency Gains

When knowledge is already held in memory, the system can skip repeated retrieval for the same information, which can lower computational cost and latency.

5. Personalization

AI can develop user-specific knowledge, enabling more tailored and relevant interactions.

Real-World Applications

Semantic memory systems are poised to reshape multiple industries:

Healthcare

AI can build long-term patient profiles, track treatment effectiveness, and support more accurate diagnoses.

Education

Learning platforms can adapt to individual students by remembering progress, learning styles, and knowledge gaps.

Enterprise Systems

Organizations can deploy AI that understands internal workflows, documents, and processes, leading to better decision-making.

Autonomous Agents

AI agents become more capable of handling complex, multi-step tasks by maintaining context and learning from past interactions.

Challenges in Building Semantic Memory

Despite its promise, this approach introduces new challenges:

Scalability

Managing large, interconnected knowledge systems is computationally demanding.

Data Accuracy

Incorrect information stored in memory can propagate errors over time.

Bias and Fairness

Persistent memory systems risk reinforcing biases if not carefully managed.

Privacy and Security

Storing long-term knowledge raises concerns about sensitive data handling.

Interpretability

As systems become more complex, understanding how decisions are made becomes harder.

Hybrid Future: Retrieval + Memory

The future of AI is unlikely to rely solely on semantic memory or retrieval systems. Instead, a hybrid approach is emerging:

- Retrieval systems provide access to vast, up-to-date information

- Semantic memory systems provide structured, persistent knowledge

Together, they create AI systems that are both informed and intelligent.
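A hybrid setup can be sketched as "consult memory first, fall back to retrieval, then remember the result." The `HybridAnswerer` class and the dictionary-backed retriever are assumptions for illustration; a real semantic memory would store structured facts rather than whole question strings.

```python
from typing import Callable, Optional


class HybridAnswerer:
    """Checks persistent memory before calling an external retriever."""

    def __init__(self, retriever: Callable[[str], Optional[str]]) -> None:
        self.memory: dict[str, str] = {}
        self.retriever = retriever
        self.retrieval_calls = 0

    def answer(self, question: str) -> Optional[str]:
        key = question.strip().lower()
        if key in self.memory:
            return self.memory[key]  # served from memory, no retrieval needed
        self.retrieval_calls += 1
        result = self.retriever(question)
        if result is not None:
            self.memory[key] = result  # keep what was learned for next time
        return result


# Stand-in for an external search index or vector database.
sources = {"what is the capital of france?": "Paris"}


def lookup(question: str) -> Optional[str]:
    return sources.get(question.strip().lower())


hybrid = HybridAnswerer(lookup)
first = hybrid.answer("What is the capital of France?")
second = hybrid.answer("what is the capital of france?  ")
print(first, second, hybrid.retrieval_calls)
```

The second question is answered without touching the retriever, while anything not yet in memory still benefits from the retriever's broader, up-to-date coverage.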

The Bigger Shift: From Tools to Cognitive Systems

The rise of semantic memory signals a broader transformation in AI:

From:

- Stateless systems

- Query-response models

- Data retrieval engines

To:

- Stateful systems

- Knowledge-driven reasoning

- Cognitive architectures

This shift moves AI closer to functioning as a knowledge-driven reasoning system rather than a purely reactive tool.

Future Outlook

Looking ahead, semantic memory systems could enable:

- AI that continuously learns throughout its lifecycle

- Systems that develop domain expertise over time

- More natural and human-like interactions

- Advanced autonomous decision-making

As research progresses, we may see AI systems that not only assist humans but collaborate with them as intelligent partners.

Conclusion

Beyond PageIndex lies a new frontier in artificial intelligence, one defined not by how efficiently machines retrieve information, but by how effectively they understand, organize, and remember it.

Semantic memory systems represent a crucial step toward this future. By enabling AI to build structured knowledge and maintain long-term context, they transform machines from passive responders into active learners.

The journey from retrieval to memory is not just a technical upgrade; it is a fundamental reimagining of what AI can become.

In this new era, the most powerful AI systems will not be those that know how to search the fastest, but those that know how to think, connect, and remember.