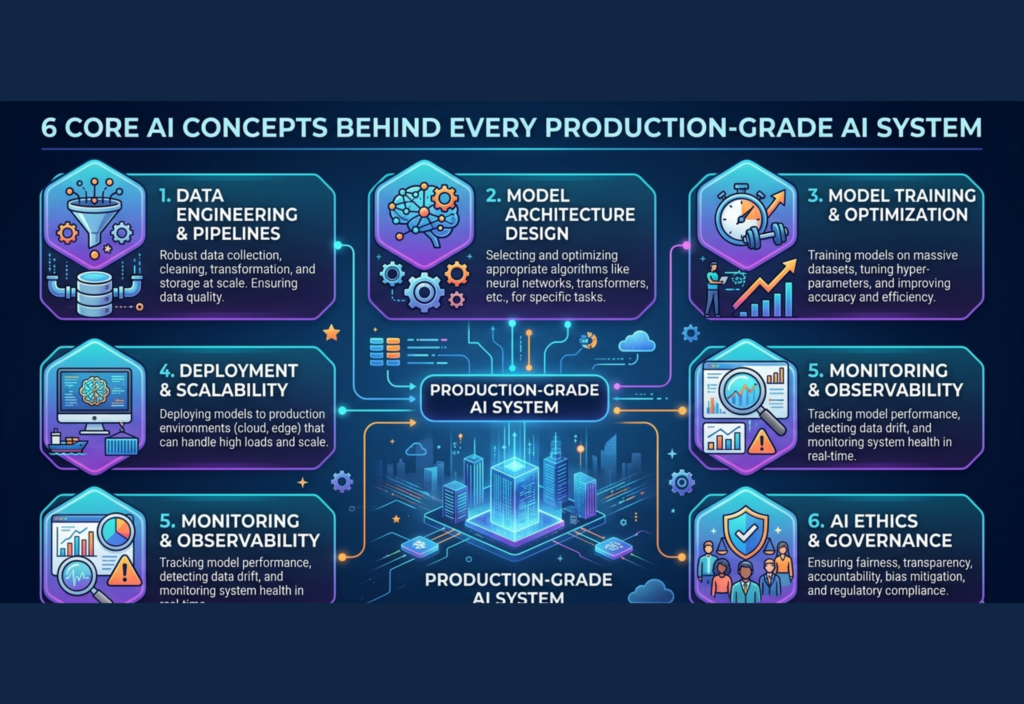

Artificial intelligence has rapidly become a core part of modern digital systems, powering everything from search engines and recommendation platforms to fraud detection and intelligent automation tools. As AI evolves, businesses are no longer focused only on building models; they are focused on building reliable, scalable systems that are ready for real-world use. Production-grade AI systems rely on six core concepts to work effectively at scale, so that models are not just accurate in testing environments but also stable, efficient, and adaptable in real-world conditions. Understanding these concepts is essential for anyone working with AI, because they define how data is processed, how models are trained, how systems are deployed, and how performance is maintained over time.

1. Data Engineering and Data Foundation

Every AI system starts with data. Without high-quality data, even the most advanced model will produce unreliable results.

In production systems, data is treated as a continuous pipeline, not a one-time input. It is constantly collected, cleaned, and processed.

Key elements include:

- Structured and unstructured data handling

- Real-time data streaming and batch processing

- Data cleaning and normalization

- Feature engineering for better model performance

Poor data quality leads to biased or inaccurate predictions. That is why strong data engineering is the backbone of every AI system.
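To make the cleaning and normalization steps above more concrete, here is a minimal sketch in plain Python. The record layout (dictionaries with a hypothetical `amount` field) and the function names are assumptions made for this example, not part of any specific pipeline.

```python
def clean_records(records: list[dict]) -> list[dict]:
    """Drop records whose 'amount' field is missing or not numeric."""
    cleaned = []
    for record in records:
        value = record.get("amount")
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, (int, float)) and not isinstance(value, bool):
            cleaned.append(record)
    return cleaned


def min_max_normalize(values: list[float]) -> list[float]:
    """Rescale values to the [0, 1] range; constant input maps to 0.0."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]


# Hypothetical raw input with one null value and one empty record.
raw = [{"amount": 10}, {"amount": None}, {"amount": 30}, {"amount": 20}, {}]
cleaned = clean_records(raw)
normalized = min_max_normalize([r["amount"] for r in cleaned])
print(normalized)  # [0.0, 1.0, 0.5]
```

In a real pipeline the same two steps would usually run on every new batch or stream window, so that training and serving see data prepared in the same way.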

2. Model Development, Training, and Optimization

After data preparation, the next step is building and training the model.

This stage involves selecting the right algorithm and optimizing it for real-world performance.

Important processes include:

- Choosing machine learning or deep learning models

- Splitting datasets into training, validation, and testing sets

- Hyperparameter tuning

- Avoiding overfitting and underfitting

- Evaluating performance using metrics like accuracy, precision, recall, and F1-score

In production, models must perform well not just in testing but also in unpredictable real-world environments.
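The evaluation metrics mentioned above can be computed directly from true and predicted labels. The following is a small sketch for binary classification; the function name and the sample labels are made up for illustration.

```python
def classification_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Compute accuracy, precision, recall, and F1 for binary labels (0 or 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    correct = sum(1 for t, p in pairs if t == p)

    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return {
        "accuracy": correct / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Illustrative labels: 3 true positives, 1 false positive, 1 false negative.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0, 1, 0]
metrics = classification_metrics(y_true, y_pred)
```

Accuracy alone can be misleading on imbalanced data, which is why precision, recall, and F1 are usually reported alongside it.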

3. Scalable Deployment and System Architecture

A trained model is only useful when it can serve users efficiently at scale.

Production AI systems are designed for high availability and low latency.

Key components include:

- REST or gRPC APIs for model access

- Cloud infrastructure (AWS, Azure, GCP)

- Containerization using Docker

- Microservices architecture

- Load balancing for high traffic handling

The goal is to ensure the AI system responds quickly and reliably under heavy usage.
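As a rough illustration of load balancing, the sketch below rotates incoming requests across model replicas in round-robin order. The class name and the replica names are hypothetical, and round-robin is only the simplest of several possible policies.

```python
import itertools


class RoundRobinBalancer:
    """Hands out replicas in a fixed rotating order."""

    def __init__(self, replicas: list[str]) -> None:
        if not replicas:
            raise ValueError("at least one replica is required")
        self._cycle = itertools.cycle(replicas)

    def next_replica(self) -> str:
        """Return the replica that should serve the next request."""
        return next(self._cycle)


# Hypothetical replica names for three copies of the same model service.
balancer = RoundRobinBalancer(["model-a", "model-b", "model-c"])
assigned = [balancer.next_replica() for _ in range(5)]
print(assigned)  # ['model-a', 'model-b', 'model-c', 'model-a', 'model-b']
```

Production load balancers typically also take health checks and current load into account, which this sketch leaves out.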

4. Monitoring, Logging, and Observability

Once deployed, AI systems must be continuously monitored to ensure they are working correctly.

Over time, models may degrade due to changing data patterns, known as model drift.

Monitoring includes:

- Tracking accuracy and performance metrics

- Detecting anomalies in input data

- Logging system behavior and errors

- Monitoring latency and response time

- Identifying bias or unexpected outputs

Without proper monitoring, systems can fail silently.
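One simple way to notice changing input data is to compare a live feature against a reference sample taken at training time. The sketch below uses a basic heuristic: the shift in the mean, measured in reference standard deviations. The function name, the sample values, and the threshold of 2.0 are all assumptions for illustration; real systems often use more robust statistical tests.

```python
import statistics


def mean_shift_score(reference: list[float], live: list[float]) -> float:
    """Absolute difference of means, in units of the reference std deviation."""
    ref_mean = statistics.fmean(reference)
    ref_std = statistics.pstdev(reference)
    live_mean = statistics.fmean(live)
    if ref_std == 0:
        # A constant reference: any change at all counts as an infinite shift.
        return 0.0 if live_mean == ref_mean else float("inf")
    return abs(live_mean - ref_mean) / ref_std


DRIFT_THRESHOLD = 2.0  # assumed cutoff; tune per feature in practice

reference = [10.0, 12.0, 11.0, 9.0, 8.0]   # values seen at training time
stable = [11.0, 9.0, 10.0, 10.0, 10.0]     # live data, same distribution
shifted = [15.0, 16.0, 14.0, 15.0, 15.0]   # live data after a shift

stable_alert = mean_shift_score(reference, stable) > DRIFT_THRESHOLD
shifted_alert = mean_shift_score(reference, shifted) > DRIFT_THRESHOLD
```

Here only the shifted sample crosses the threshold, which is the signal a monitoring job would turn into an alert.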

5. Continuous Learning and Feedback Loops

AI systems must evolve over time to stay relevant.

This is achieved through feedback loops that allow models to learn from new data and user behavior.

Examples include:

- User clicks and engagement data

- Feedback-based improvements

- Periodic model retraining

- Reinforcement learning systems

However, feedback must be carefully managed to avoid reinforcing incorrect patterns.
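Periodic retraining needs some rule for deciding when enough new feedback has arrived. The following sketch collects labeled feedback and signals once a batch is ready. The `FeedbackBuffer` class and the batch size are hypothetical, and the actual training job is left out.

```python
class FeedbackBuffer:
    """Collects labeled feedback and signals when a retrain is due."""

    def __init__(self, retrain_after: int) -> None:
        self.retrain_after = retrain_after
        self.examples: list[tuple[list[float], int]] = []

    def add(self, features: list[float], label: int) -> bool:
        """Store one example; return True once enough have accumulated."""
        self.examples.append((features, label))
        return len(self.examples) >= self.retrain_after

    def drain(self) -> list[tuple[list[float], int]]:
        """Hand the collected batch to a training job and start over."""
        batch, self.examples = self.examples, []
        return batch


buffer = FeedbackBuffer(retrain_after=3)
signals = [buffer.add([float(i)], i % 2) for i in range(3)]
print(signals)  # [False, False, True]
batch = buffer.drain()
```

Before the drained batch is used for training, it is a natural place to review or filter the feedback, which helps avoid reinforcing incorrect patterns.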

6. Ethics, Governance, and Responsible AI

Modern AI systems must be built responsibly, with fairness and transparency in mind.

Key considerations include:

- Bias detection and mitigation

- Data privacy protection

- Model explainability

- Regulatory compliance

- Ethical decision-making

Responsible AI ensures systems are not only powerful but also safe and trustworthy for users.

Conclusion

Production-grade AI systems are built on more than just algorithms. They require a full ecosystem of data pipelines, scalable infrastructure, continuous monitoring, and ethical governance.

These six core concepts define how real-world AI systems operate at scale and ensure they remain reliable, adaptive, and responsible.

6 Core AI Concepts Behind Every Production-Grade AI System

Artificial Intelligence has moved far beyond experiments and research labs. Today, it powers critical systems such as fraud detection in banking, recommendation engines in social media, autonomous assistants, healthcare diagnostics, and enterprise automation tools.

But building an AI model is not enough. What makes a system truly “production-grade” is the ability to perform reliably, scale efficiently, and adapt continuously in real-world conditions.

Below are the six essential concepts that form the backbone of every production-level AI system.

1. Data Engineering and Data Foundation

Data is the foundation of every AI system. Without proper data engineering, even the most advanced model will fail in production.

In real-world systems, data is not static. It is continuously generated, processed, and refined through automated pipelines.

A strong data foundation includes:

- Real-time data ingestion from multiple sources

- Batch processing for historical data analysis

- Data cleaning to remove noise and inconsistencies

- Handling missing, duplicate, or corrupted records

- Feature engineering to improve model learning quality

- Secure data storage and governance

One of the biggest challenges in production AI is maintaining data consistency at scale. As data flows from different systems, ensuring accuracy and synchronization becomes critical.

High-quality data directly impacts prediction accuracy, fairness, and system reliability.
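Duplicate records are a common source of inconsistency when the same entity arrives from several systems. A minimal sketch of one possible rule, keeping only the most recent record per key, is shown below. The field names `id` and `updated_at` are assumptions for the example.

```python
def deduplicate_latest(records: list[dict]) -> list[dict]:
    """Keep the most recent record per 'id', based on 'updated_at'."""
    latest: dict[str, dict] = {}
    for record in records:
        key = record["id"]
        if key not in latest or record["updated_at"] > latest[key]["updated_at"]:
            latest[key] = record
    return list(latest.values())


# Hypothetical records: user u1 appears twice with different timestamps.
records = [
    {"id": "u1", "updated_at": 1, "city": "Oslo"},
    {"id": "u2", "updated_at": 1, "city": "Rome"},
    {"id": "u1", "updated_at": 3, "city": "Bergen"},
]
deduplicated = deduplicate_latest(records)
```

"Latest wins" is only one policy; some pipelines instead merge fields or flag conflicts for review.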

2. Model Development, Training, and Optimization

Once data is prepared, the next step is building and training AI models. This stage determines how well the system will perform in real-world conditions.

Model development involves selecting algorithms that best suit the problem. These could include classical machine learning models such as decision trees or deep learning architectures such as convolutional networks and transformers.

Key processes include:

- Selecting the appropriate model architecture

- Splitting datasets into training, validation, and testing sets

- Hyperparameter tuning for better performance

- Preventing overfitting and underfitting issues

- Evaluating model performance using metrics such as accuracy, precision, recall, and F1-score

- Cross-validation for robustness

In production environments, models must not only perform well on test data but also generalize effectively to unseen real-world data. This is often more challenging than initial training.
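Cross-validation, listed above, checks how well a model generalizes by training and validating on several different splits of the same data. The sketch below only generates the index splits for k-fold cross-validation; the function name is made up, and the indices are not shuffled, which real data usually requires first.

```python
def k_fold_indices(n_samples: int, k: int) -> list[tuple[list[int], list[int]]]:
    """Return (train_indices, validation_indices) pairs for k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Interleaved slicing gives folds whose sizes differ by at most one.
    folds = [indices[i::k] for i in range(k)]
    splits = []
    for i, held_out in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        splits.append((train, held_out))
    return splits


splits = k_fold_indices(n_samples=6, k=3)
for train_idx, val_idx in splits:
    print("train:", train_idx, "validate:", val_idx)
```

Each sample is used for validation exactly once, so the averaged score depends less on one lucky or unlucky split.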

3. Scalable Deployment and System Architecture

After training, the model must be deployed into a production environment where it can serve real users.

Deployment is not just about running a model—it is about building a scalable system that can handle high traffic and deliver fast responses.

Key components of production deployment include:

- REST or gRPC APIs for model access

- Cloud platforms like AWS, Azure, or Google Cloud

- Containerization with Docker and orchestration with Kubernetes

- Microservices-based architecture for flexibility

- Load balancing for handling large-scale traffic

- Edge computing for low-latency applications

In real-world systems, latency is critical. Even milliseconds of delay can hurt user experience in search engines or affect trade execution in financial trading systems.

Therefore, production AI systems are optimized for both speed and scalability.
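Latency is usually tracked with percentiles rather than averages, because a few slow requests can hide behind a healthy-looking mean. Here is a small sketch using the nearest-rank percentile method; the function name and the sample latencies are invented for illustration.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the value at rank ceil(pct/100 * n)."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


# Hypothetical response times in milliseconds, with one slow outlier.
latencies_ms = [12, 15, 11, 14, 13, 250, 12, 16, 13, 14]
p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
print(p50, p95)  # 13 250
```

The median looks fine at 13 ms, while the 95th percentile exposes the 250 ms outlier that some users actually experience.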

4. Monitoring, Logging, and Observability

Once deployed, an AI system enters its most critical phase: real-world operation.

Unlike traditional software, AI systems can degrade over time due to changes in data distribution. This is known as model drift.

To ensure long-term reliability, continuous monitoring is essential.

Monitoring includes:

- Tracking model accuracy over time

- Detecting anomalies in input data

- Monitoring system latency and performance

- Logging all predictions and system events

- Identifying bias or unexpected behavior

- Alerting when performance drops below thresholds

Observability tools combine logs, metrics, and traces to show how the system behaves internally.

Without proper monitoring, an AI system may continue running while silently producing incorrect or biased outputs.
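Threshold-based alerting can be sketched as a rolling window over recent predictions whose true outcomes are known. The `AccuracyMonitor` class, the window size, and the threshold below are assumptions for the example rather than a standard tool.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent predictions."""

    def __init__(self, window: int, threshold: float) -> None:
        self.outcomes: deque = deque(maxlen=window)  # True means correct
        self.threshold = threshold

    def record(self, prediction: int, actual: int) -> None:
        self.outcomes.append(prediction == actual)

    def accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Wait for a full window so a few early mistakes do not trigger alerts.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy() < self.threshold


monitor = AccuracyMonitor(window=4, threshold=0.75)
alerts = []
for prediction, actual in [(1, 1), (0, 0), (1, 0), (0, 1)]:
    monitor.record(prediction, actual)
    alerts.append(monitor.should_alert())
print(alerts)  # [False, False, False, True]
```

In practice the true labels often arrive with a delay, so this kind of check usually runs on yesterday's or last week's predictions rather than live ones.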

5. Continuous Learning and Feedback Loops

AI systems must evolve over time to remain useful. This is where continuous learning comes into play.

A production AI system learns from real-world feedback and adapts its behavior accordingly.

Common feedback sources include:

- User interactions (clicks, likes, purchases)

- Human feedback and corrections

- System-generated logs

- Shifts in data patterns as the environment changes

Continuous learning approaches include:

- Periodic retraining of models

- Online learning (real-time updates)

- Reinforcement learning systems

- Human-in-the-loop training

However, feedback loops must be carefully designed. Poorly structured feedback can reinforce incorrect behavior or introduce bias into the system.

The goal is to ensure the AI system improves safely and consistently over time.
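Online learning, listed above, means updating the model one example at a time instead of retraining from scratch. A classic perceptron is used below purely as an illustration of that idea; the class name and the tiny data stream are made up, and production systems would use more capable models.

```python
class OnlinePerceptron:
    """Binary classifier that updates its weights after every example."""

    def __init__(self, n_features: int, learning_rate: float = 1.0) -> None:
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, x: list[float]) -> int:
        score = sum(w * xi for w, xi in zip(self.weights, x)) + self.bias
        return 1 if score > 0 else 0

    def update(self, x: list[float], label: int) -> None:
        """Adjust weights only when the current prediction is wrong."""
        error = label - self.predict(x)  # -1, 0, or +1
        if error != 0:
            step = self.learning_rate * error
            self.weights = [w + step * xi for w, xi in zip(self.weights, x)]
            self.bias += step


# Hypothetical feedback stream: label is 1 when the single feature is positive.
stream = [([2.0], 1), ([-1.0], 0), ([3.0], 1), ([-2.0], 0)]
model = OnlinePerceptron(n_features=1)
for features, label in stream:
    model.update(features, label)
```

Because every example changes the model immediately, a burst of bad feedback can also change it immediately, which is one reason online updates are often combined with the safeguards described above.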

6. Ethics, Governance, and Responsible AI

As AI becomes more powerful, ethical considerations are no longer optional.

Production-grade AI systems must ensure fairness, transparency, and accountability.

Key ethical principles include:

- Bias detection and mitigation in datasets and models

- Data privacy protection and compliance with regulations

- Transparency in AI decision-making

- Explainability of model predictions

- Security against malicious attacks or misuse

- Responsible data usage policies

For example, an AI hiring system must ensure it does not discriminate based on gender, race, or background. Similarly, financial AI systems must provide fair and explainable credit decisions.

Governance frameworks ensure that AI systems operate within legal and ethical boundaries while maintaining user trust.
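A basic bias check for a system like the hiring example is to compare how often each group receives a positive decision. The sketch below computes the gap between the highest and lowest positive rates, often called the demographic parity difference. The group names and predictions are invented, and this is only one of several fairness metrics.

```python
def positive_rate(predictions: list[int]) -> float:
    """Share of predictions that are positive (1)."""
    return sum(predictions) / len(predictions)


def demographic_parity_difference(preds_by_group: dict[str, list[int]]) -> float:
    """Gap between the highest and lowest positive rate across groups."""
    rates = [positive_rate(p) for p in preds_by_group.values()]
    return max(rates) - min(rates)


# Hypothetical model decisions (1 = shortlisted) for two applicant groups.
preds_by_group = {
    "group_a": [1, 1, 0, 1],
    "group_b": [1, 0, 0, 0],
}
gap = demographic_parity_difference(preds_by_group)
print(gap)  # 0.5
```

A gap of 0.5 means one group is shortlisted far more often than the other, which would warrant a closer look at the data and the model before deployment.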

Conclusion

Building a production-grade AI system is a complex engineering challenge that goes far beyond training models.

It requires a complete ecosystem involving:

- Strong data engineering

- Well-optimized models

- Scalable deployment infrastructure

- Continuous monitoring systems

- Adaptive learning mechanisms

- Ethical governance frameworks

These six core concepts form the foundation of every successful AI system in the real world.

As AI continues to evolve, the systems that succeed will not just be the most intelligent—but the most reliable, scalable, and responsible.