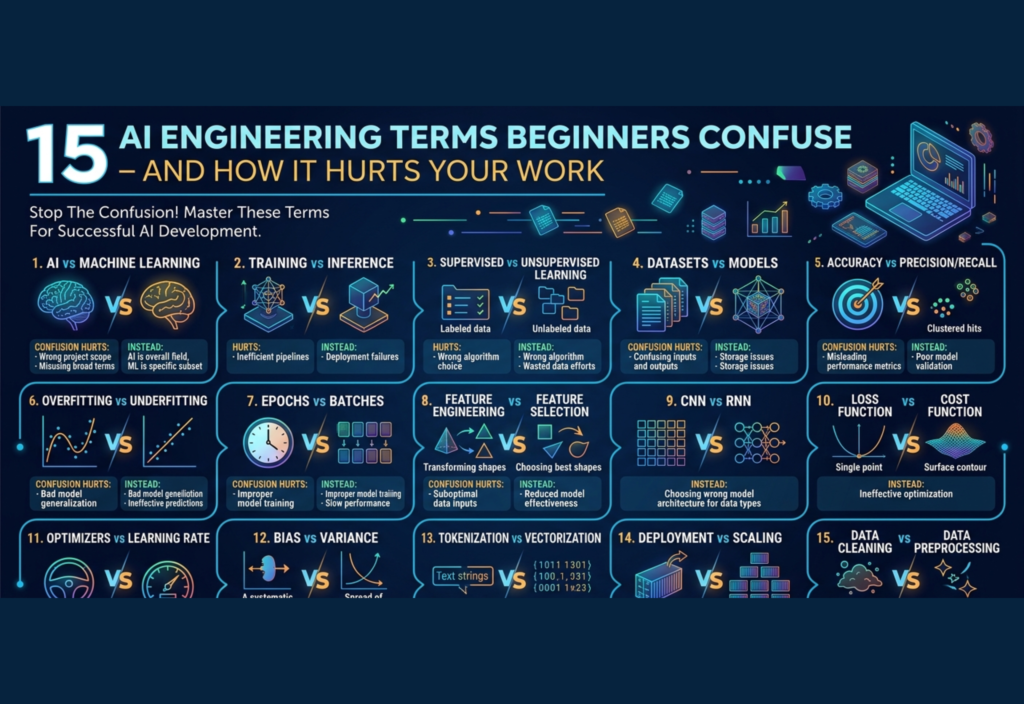

AI engineering terms confusion is one of the biggest challenges beginners face when entering the world of Artificial Intelligence. While AI is no longer a futuristic concept and is now shaping industries, businesses, and everyday applications, many newcomers struggle with understanding the core terminology correctly.

At first glance, many AI terms sound similar, which increases AI engineering terms confusion even more. These terms are often used interchangeably in casual discussions, but in real-world engineering, small misunderstandings can lead to serious problems—ranging from inefficient models to completely broken systems.

In this guide, we’ll break down 15 common examples of AI engineering terms confusion, and explain how these mistakes can silently damage your work, performance, and results.

3. Algorithm vs Model

An algorithm is a mathematical procedure or method used to learn patterns from data. A model is the output of that process after training.

For example, linear regression is an algorithm; the trained equation you get is the model.

Why it hurts your work:

If you don’t understand the difference, debugging becomes difficult. You won’t know whether the issue lies in the algorithm choice or the trained model’s performance.

4. Training vs Inference

Training is the process of teaching a model using data. Inference is when the trained model makes predictions on new data.

Many beginners focus only on training and ignore inference.

Why it hurts your work:

In real-world applications, inference speed matters more than training time. Confusing the two can result in slow, inefficient systems that fail in production.

5. Dataset vs Data Pipeline

A dataset is a collection of data used for training or evaluation. A data pipeline is the system that collects, processes, cleans, and feeds data into your model.

Beginners often think having a dataset is enough.

Why it hurts your work:

Without a proper pipeline, your data becomes inconsistent and unreliable. This leads to poor model performance and hard-to-reproduce results.

6. Accuracy vs Precision vs Recall

Accuracy measures overall correctness, but precision and recall provide deeper insights, especially in imbalanced datasets.

- Precision: How many predicted positives are correct

- Recall: How many actual positives are captured

Why it hurts your work:

Relying only on accuracy can mislead you. For example, a fraud detection system might show 99% accuracy but still fail to detect actual fraud cases.

7. Overfitting vs Underfitting

Overfitting happens when a model memorizes the training data but fails on new data. Underfitting occurs when the model fails to learn meaningful patterns.

Why it hurts your work:

Both lead to poor generalization. Beginners often celebrate high training accuracy without realizing their model is overfitting and useless in real-world scenarios.

8. Feature vs Label

Features are input variables used to make predictions. Labels are the outputs the model is trying to predict.

Why it hurts your work:

Mixing them up can completely break your dataset. Your model might learn incorrect relationships or fail entirely during training.

9. Supervised vs Unsupervised Learning

Supervised learning uses labeled data, while unsupervised learning identifies patterns without labels.

Beginners often assume labeled data is always necessary.

Why it hurts your work:

You may waste time labeling large datasets when an unsupervised approach could have provided useful insights faster.

10. Tokenization vs Embedding

In natural language processing:

- Tokenization breaks text into smaller units (words, subwords)

- Embedding converts those units into numerical vectors

Why it hurts your work:

Skipping proper tokenization or misunderstanding embeddings leads to poor text representation and weak model performance.

11. Parameters vs Hyperparameters

Parameters are learned during training (e.g., weights in a neural network). Hyperparameters are set before training (e.g., learning rate, batch size).

Why it hurts your work:

If you don’t tune hyperparameters properly, your model may never reach optimal performance—even if your data and algorithm are correct.

12. Batch Size vs Epoch

- Batch size: Number of samples processed before updating the model

- Epoch: One full pass through the entire dataset

Why it hurts your work:

Improper settings can slow down training or cause unstable learning. Beginners often choose random values without understanding their impact.

13. Latency vs Throughput

Latency refers to how fast a system responds to a single request. Throughput measures how many requests a system can handle over time.

Why it hurts your work:

Optimizing only for throughput might increase latency, making your application feel slow to users.

14. Fine-tuning vs Training from Scratch

Fine-tuning involves adjusting a pre-trained model for a specific task. Training from scratch means building a model entirely from the ground up.

Why it hurts your work:

Training from scratch when a pre-trained model exists wastes time, energy, and computational cost.

15. Prompt Engineering vs Model Engineering

Prompt engineering focuses on crafting inputs to get better outputs from AI systems. Model engineering involves building and optimizing models themselves.

Why it hurts your work:

If you rely only on prompts, your system may lack consistency, scalability, and control.

The Bigger Problem: Why These Confusions Matter

At a surface level, these mistakes may seem minor. But in real-world AI engineering, they compound quickly.

Misunderstanding basic terms leads to:

- Poor architectural decisions

- Inefficient use of resources

- Higher operational costs

- Difficulty in debugging and scaling systems

In professional environments, these mistakes don’t just affect your learning—they affect product quality, team performance, and business outcomes.

How to Avoid These Mistakes

Improving your understanding doesn’t require memorizing definitions—it requires practical clarity.

Focus on:

- Building small projects to apply concepts

- Comparing similar terms side by side

- Asking “when should I use this?” instead of just “what is this?”

- Reviewing mistakes and understanding why they happened

Final Thoughts

AI engineering is not just about using powerful tools—it’s about understanding them correctly. The difference between a beginner and a skilled engineer often comes down to clarity in fundamentals.

When you truly understand these terms, you:

- Build better systems

- Work more efficiently

- Debug faster

- Make smarter technical decisions

In the fast-moving world of AI, clarity is your biggest advantage.