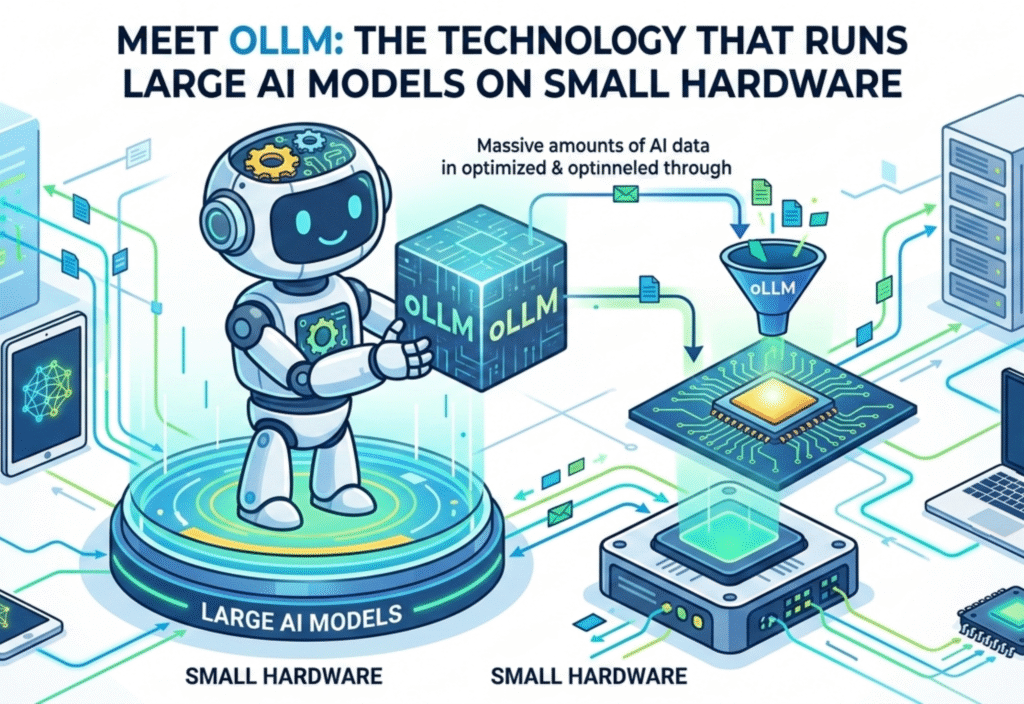

oLLM allows large AI models to run on small hardware, making it possible to execute high-performance AI on laptops, smartphones, and edge devices. Artificial intelligence has transformed the digital landscape, and with frameworks like oLLM, running massive LLMs locally is now practical…

oLLM (Optimized Large Language Models) offers a way to run large AI models on modest hardware without significantly compromising performance. It aims to democratize AI by making it accessible to anyone with a laptop, edge device, or even a smartphone.

Understanding oLLM

oLLM is not a single AI model but rather a framework and methodology designed to optimize existing large models. It enables these models to operate efficiently on low-resource devices by intelligently reducing memory footprint, optimizing computation, and improving inference speed.

The framework achieves this through three key strategies:

Quantization – Reduces the numerical precision of model weights from 32-bit floating-point numbers to smaller formats such as 8-bit or even 4-bit, drastically reducing memory usage while maintaining near-original accuracy.

Pruning – Removes redundant or less important neurons and connections in the neural network, making the model smaller and faster.

Memory-Efficient Operations – Optimizes the attention mechanism and other computationally heavy operations to minimize RAM consumption.

By combining these strategies, oLLM allows even massive models with tens of billions of parameters to run on devices with limited resources—something that previously required multiple high-end GPUs.

Why oLLM Matters

The importance of oLLM lies in its potential to redefine how AI is accessed and used. Traditionally, running large models required cloud access, which introduces latency, recurring costs, and potential privacy concerns. oLLM addresses these challenges by enabling local deployment.

1. Accessibility for Everyone

Developers, students, and small businesses can now experiment with advanced AI models without investing in costly infrastructure. This opens opportunities for innovation, research, and AI-based solutions in regions and organizations that were previously excluded from high-performance AI.

2. Faster Inference

By optimizing model size and computation, oLLM improves inference speed. Tasks like text generation, summarization, or code completion can now be executed in near real-time on standard laptops, enhancing productivity and user experience.

3. Privacy and Security

With local AI inference, sensitive data does not need to leave the device. Industries like healthcare, finance, and government benefit from this capability because it reduces exposure to data breaches and makes it easier to comply with privacy regulations such as GDPR or HIPAA.

4. Cost and Energy Efficiency

Cloud-based AI can be expensive and energy-intensive. Running large models locally with oLLM reduces the dependence on cloud infrastructure, which can save both cost and energy. This approach aligns with sustainability goals and can reduce the carbon footprint associated with large-scale AI computation.

How oLLM Works: A Technical Overview

oLLM relies on advanced techniques to compress and optimize large models without significantly affecting performance. Here’s a closer look at its core technologies:

Quantization

Traditional models store weights in 32-bit floating-point numbers, which consume enormous memory. oLLM converts these weights into lower-bit representations, often 8-bit or even 4-bit integers. Surprisingly, this compression has minimal impact on the model’s ability to generate accurate predictions, thanks to careful calibration techniques.
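To make the idea concrete, here is a minimal sketch of symmetric per-tensor 8-bit quantization in NumPy. It illustrates the general technique only and is not oLLM's actual implementation; the function names `quantize_int8` and `dequantize_int8` are hypothetical, and real systems typically add per-channel scales and calibration data.

```python
import numpy as np

def quantize_int8(weights):
    """Symmetric per-tensor quantization of float32 weights to int8.

    Returns the int8 tensor and the scale needed to map it back to floats.
    """
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize_int8(q, scale):
    """Recover an approximation of the original float32 weights."""
    return q.astype(np.float32) * scale

# Illustrative weight matrix (random values, not taken from a real model).
rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.02, size=(256, 256)).astype(np.float32)

q, scale = quantize_int8(w)
w_hat = dequantize_int8(q, scale)
max_err = float(np.max(np.abs(w - w_hat)))

print(f"float32 bytes: {w.nbytes}, int8 bytes: {q.nbytes}")
print(f"largest round-trip error: {max_err:.6f} (scale = {scale:.6f})")
```

The int8 copy takes a quarter of the memory, and the round-trip error of each weight is bounded by roughly half the scale, which is why accuracy can stay close to the original when the weight distribution is well behaved.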

Pruning

Neural networks often contain redundant connections that contribute little to output accuracy. oLLM identifies and removes these unnecessary parts, resulting in smaller, faster models. There are two main types of pruning:

Structured Pruning: Entire layers or neurons are removed.

Unstructured Pruning: Individual weights are set to zero, leaving the shape of each layer and the overall architecture unchanged.
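The difference between the two types can be sketched in a few lines of NumPy. This is an illustration under simple assumptions (magnitude as the importance score, rows of a weight matrix standing in for neurons), not a description of how oLLM selects what to prune; both function names are hypothetical.

```python
import numpy as np

def prune_unstructured(weights, sparsity):
    """Zero the smallest-magnitude fraction of weights; the shape is unchanged."""
    k = int(sparsity * weights.size)
    pruned = weights.flatten()  # flatten() returns a copy
    if k > 0:
        # Indices of the k weights with the smallest absolute value.
        smallest = np.argpartition(np.abs(pruned), k - 1)[:k]
        pruned[smallest] = 0.0
    return pruned.reshape(weights.shape)

def prune_structured(weights, keep):
    """Keep only the `keep` rows (neurons) with the largest L2 norm."""
    norms = np.linalg.norm(weights, axis=1)
    kept_rows = np.sort(np.argsort(norms)[-keep:])
    return weights[kept_rows]

rng = np.random.default_rng(0)
layer = rng.normal(size=(8, 8))

sparse_layer = prune_unstructured(layer, sparsity=0.5)
small_layer = prune_structured(layer, keep=4)

print(sparse_layer.shape, np.count_nonzero(sparse_layer))
print(small_layer.shape)
```

Unstructured pruning keeps the 8x8 shape and only saves memory or time if the runtime exploits the zeros, whereas structured pruning produces a genuinely smaller dense matrix.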

Memory-Efficient Attention

The attention mechanism is often the most memory-hungry part of a transformer at inference time, especially for long input sequences, because the intermediate score matrix in standard attention grows with the square of the sequence length. oLLM uses optimized algorithms to compute attention only on relevant portions of input sequences, reducing memory usage while maintaining performance.
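The article does not specify which attention algorithm oLLM uses. As a stand-in illustration of the memory trade-off, the sketch below processes queries in chunks so that only a `chunk_size` by `n` slice of the score matrix exists at any time instead of the full `n` by `n` matrix. The function names and the `chunk_size` parameter are assumptions made for this example.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over the last axis."""
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def full_attention(q, k, v):
    """Reference version: materializes the whole (n, n) score matrix."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores) @ v

def chunked_attention(q, k, v, chunk_size):
    """Same result, but only a (chunk_size, n) score block is live at once."""
    out = np.empty((q.shape[0], v.shape[1]), dtype=q.dtype)
    for start in range(0, q.shape[0], chunk_size):
        stop = start + chunk_size
        scores = q[start:stop] @ k.T / np.sqrt(q.shape[-1])
        out[start:stop] = softmax(scores) @ v
    return out

rng = np.random.default_rng(0)
n, d = 10, 16
q = rng.normal(size=(n, d))
k = rng.normal(size=(n, d))
v = rng.normal(size=(n, d))

reference = full_attention(q, k, v)
chunked = chunked_attention(q, k, v, chunk_size=4)
print(np.allclose(reference, chunked))
```

Because each query row's softmax is independent of the others, chunking over queries changes peak memory but not the output. Approaches that skip parts of the input entirely, such as sliding-window attention, save more memory but produce an approximation.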

Dynamic Loading and Caching

oLLM does not load the entire model into memory at once. Instead, it loads parts of the model dynamically based on the task, and intelligently caches frequently used components. This allows devices with limited RAM to handle models that were previously impossible to run locally.
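One way to picture this is a small cache that reads layer weights from disk only when they are needed and evicts the least recently used layer when it is full. The sketch below is a toy model of that idea, assuming one `.npy` file per layer; the `LayerCache` class, the file naming, and the tiny `tanh` "model" are all hypothetical and do not reflect oLLM's internals.

```python
import tempfile
from collections import OrderedDict
from pathlib import Path

import numpy as np

class LayerCache:
    """Loads layer weights from disk on demand, keeping at most `capacity` in RAM."""

    def __init__(self, layer_dir, capacity):
        self.layer_dir = Path(layer_dir)
        self.capacity = capacity
        self._cache = OrderedDict()
        self.disk_loads = 0

    def __len__(self):
        return len(self._cache)

    def get(self, index):
        if index in self._cache:
            self._cache.move_to_end(index)  # mark as most recently used
            return self._cache[index]
        weights = np.load(self.layer_dir / f"layer_{index}.npy")
        self.disk_loads += 1
        self._cache[index] = weights
        if len(self._cache) > self.capacity:
            self._cache.popitem(last=False)  # evict least recently used
        return weights

def run_model(x, cache, num_layers):
    """Toy forward pass that touches one layer at a time."""
    for i in range(num_layers):
        x = np.tanh(x @ cache.get(i))
    return x

rng = np.random.default_rng(0)
with tempfile.TemporaryDirectory() as tmp:
    # Write four small random "layers" to disk (stand-ins for real weights).
    for i in range(4):
        np.save(Path(tmp) / f"layer_{i}.npy", rng.normal(size=(8, 8)))

    cache = LayerCache(tmp, capacity=2)
    y = run_model(rng.normal(size=(1, 8)), cache, num_layers=4)
    loads_after_pass = cache.disk_loads
    cache.get(3)  # layer 3 is still resident, so no disk read happens
    loads_after_hit = cache.disk_loads
    resident_layers = len(cache)

print(loads_after_pass, loads_after_hit, resident_layers)
```

The forward pass touches all four layers while never holding more than two in memory. The price is extra disk reads, so the speed of this approach depends heavily on storage bandwidth.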

Real-World Applications of oLLM

The ability to run large AI models on small hardware opens up a wide range of applications:

1. Chatbots and Virtual Assistants

AI-powered chatbots can now run locally, providing instant responses without relying on cloud servers. This is especially valuable in customer service, education, and personal assistant applications.

2. Content Creation

Writers, marketers, and content creators can generate articles, summaries, or scripts directly on a laptop, bypassing cloud-based AI tools. This reduces latency and dependency on internet connectivity.

3. Translation and Language Services

oLLM allows offline translation and multi-lingual support on edge devices. Travelers, journalists, and global organizations can benefit from AI-driven language solutions without needing constant internet access.

4. AI Research and Development

Researchers and developers can experiment with large models without needing access to costly data centers or high-end GPUs, accelerating innovation in AI.

5. Privacy-Sensitive Applications

Healthcare and finance applications can process sensitive data locally, ensuring compliance with privacy regulations and reducing the risk of data leaks.

Performance Benchmarks

While exact benchmarks vary depending on the specific model and hardware, early tests show promising results:

A 13-billion parameter model can run on a laptop with 16GB RAM with near-real-time inference.

Quantized and pruned models can achieve up to 4x faster execution compared to full-size models on the same hardware.

Memory usage can be reduced by 50–70%, enabling larger models to fit into smaller devices.

These improvements demonstrate that oLLM makes large AI models not just feasible but practical for everyday users.
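A quick back-of-the-envelope calculation helps put the figures above in context. The sketch below estimates the memory needed for the weights alone of a 13-billion parameter model at different precisions. It ignores activations, the attention cache, and runtime overhead, so real usage will be higher; the helper name `weight_memory_gb` is hypothetical.

```python
# Bytes needed to store one parameter in each format.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(num_params, fmt):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[fmt] / 1e9

for fmt in BYTES_PER_PARAM:
    print(f"{fmt}: {weight_memory_gb(13e9, fmt):.1f} GB")
# fp32: 52.0 GB
# fp16: 26.0 GB
# int8: 13.0 GB
# int4: 6.5 GB
```

By this estimate, the weights of a 13-billion parameter model only fit within 16GB of RAM at 8-bit precision or lower, or when parts of the model are kept on disk and loaded on demand.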

The Future of AI on Small Devices

oLLM is a significant step toward democratizing AI, making it accessible to everyone, from students and hobbyists to small enterprises. As the technology evolves, we can expect even more efficient compression techniques, faster inference algorithms, and broader support for different hardware architectures.

The implications of oLLM are profound:

Ubiquitous AI: Advanced models could run on smartphones, IoT devices, and laptops, bringing AI to the fingertips of millions.

Enhanced Privacy: Users gain full control over their data without relying on cloud-based services.

Sustainable AI: Reduced energy consumption aligns AI deployment with environmental goals.

Conclusion

oLLM is transforming the AI landscape by bridging the gap between large-scale models and accessible hardware. Its combination of quantization, pruning, memory-efficient operations, and dynamic loading allows high-performance models to run on modest devices, opening new possibilities for research, development, and everyday AI applications.