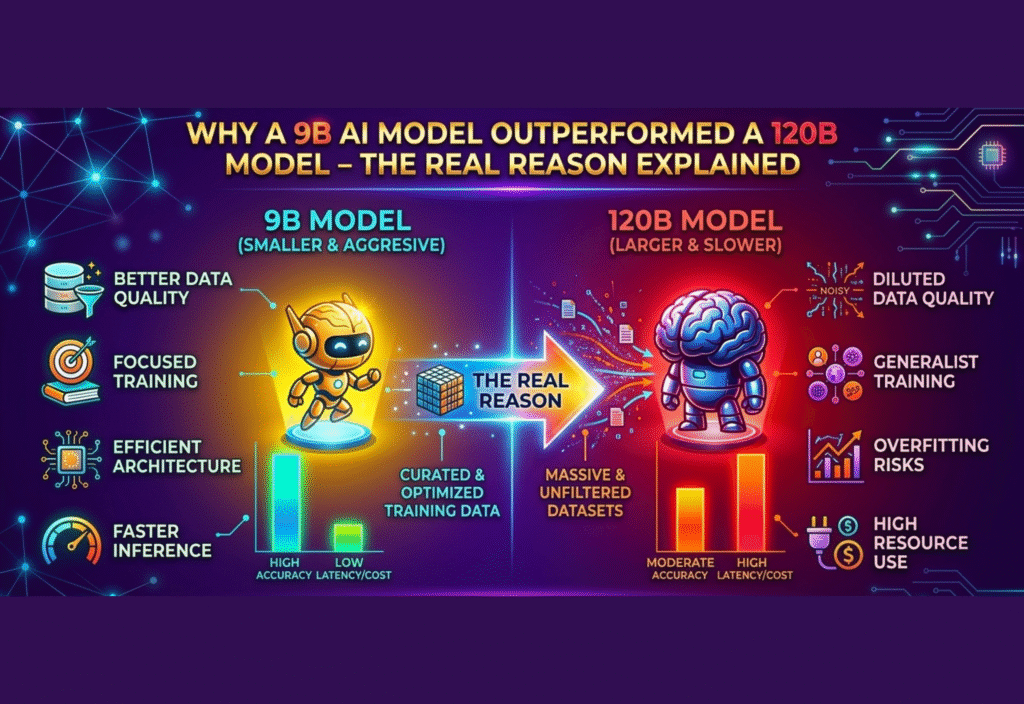

Artificial intelligence models are often judged by their size, but that assumption is changing. In the 9B vs 120B AI model debate, researchers have found that a smaller model sometimes performs better than a much larger one. This result has sparked new discussions in the AI community about efficiency, architecture design, and training quality.

In several benchmarks and real-world tasks, smaller models have matched or even outperformed significantly larger ones. This raises an important question for researchers, developers, and businesses: why can a 9B AI model sometimes outperform a 120B model?

The answer lies in several key factors such as model architecture, training data quality, optimization techniques, efficiency, and task specialization. Understanding these factors helps explain why AI performance is not simply determined by model size.

Understanding AI Model Parameters

Before exploring the reasons behind this phenomenon, it is important to understand what parameters are. In machine learning and deep learning models, parameters are the numerical values (weights and biases) that the model learns from training data. They are adjusted during training so that the model can predict or generate accurate outputs. The "B" stands for billion: a 9B model has roughly 9 billion parameters, and a 120B model has roughly 120 billion.

A model with more parameters has a larger capacity to learn complex relationships in data. This is why large models are often expected to perform better. However, this increased capacity also comes with challenges such as higher computational costs, longer training times, and the risk that much of the capacity is poorly used.

In practice, the effectiveness of a model depends not only on the number of parameters but also on how well those parameters are used.
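To make parameter counts concrete, the sketch below estimates the size of a decoder-only transformer from its shape. It uses a common rule of thumb of about 12 × d_model² weights per layer (attention plus a feed-forward block with a 4× hidden size) and ignores biases and normalization weights. The function name and the example configuration are illustrative assumptions, not the specification of any particular model.

```python
def estimate_transformer_params(n_layers, d_model, vocab_size):
    """Rough parameter count for a decoder-only transformer (assumed shape)."""
    attention = 4 * d_model * d_model      # Q, K, V, and output projections
    feed_forward = 8 * d_model * d_model   # two matrices with a 4x hidden size
    embeddings = vocab_size * d_model      # token embedding table
    return n_layers * (attention + feed_forward) + embeddings


# Hypothetical configuration, chosen only for illustration.
params = estimate_transformer_params(n_layers=32, d_model=4096, vocab_size=32000)
print(f"{params / 1e9:.2f}B parameters")
```

With these assumed values the estimate comes to roughly 6.6 billion parameters, which shows how quickly the count grows with layer width and depth.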

Efficient Model Architecture

One of the most important reasons a smaller model can outperform a larger one is better architecture design. Model architecture determines how information flows through the neural network, how layers interact with each other, and how efficiently the model processes data.

Over the past few years, AI researchers have introduced new architectural improvements that allow models to perform more efficiently. These improvements include better attention mechanisms, optimized transformer structures, and improved memory usage.

A well-designed 9B model that uses modern architecture may process information more efficiently than an older 120B model built with less optimized structures. In this situation, the smaller model can achieve higher accuracy despite having fewer parameters.
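One example of an architectural change that improves memory usage is sharing key/value heads across several query heads (often called grouped-query attention). The article does not say which models use it, so treat the following purely as an illustration. The sketch computes the key/value cache size for one sequence; the layer count, head counts, and function name are assumptions.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes needed to cache attention keys and values for one sequence."""
    # Factor 2: one tensor for keys and one for values in every layer.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical 32-layer model, 128-dim heads, 4096-token context, 16-bit values.
full = kv_cache_bytes(32, n_kv_heads=32, head_dim=128, seq_len=4096)
grouped = kv_cache_bytes(32, n_kv_heads=8, head_dim=128, seq_len=4096)
print(f"every head cached: {full / 2**30:.2f} GiB")
print(f"grouped KV heads:  {grouped / 2**30:.2f} GiB")
```

In this hypothetical setup, caching 8 key/value heads instead of 32 cuts the cache to a quarter of its size without changing the number of layers.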

The Role of High-Quality Training Data

Another critical factor that influences model performance is training data quality. Large language models learn from massive datasets that include text, code, and other forms of information. If the dataset contains noise, incorrect information, or irrelevant content, the model may learn inaccurate patterns.

A smaller AI model trained on carefully curated, high-quality datasets may perform better than a larger model trained on large but unfiltered data collections. Researchers now recognize that high-quality data can sometimes be more valuable than simply increasing the amount of data.

Because of this shift, many AI developers focus on improving dataset filtering, removing low-quality data, and prioritizing reliable information sources during training.
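A minimal sketch of what such filtering can look like is shown below. It drops near-verbatim duplicates, very short texts, and texts made up mostly of symbols or digits. The thresholds and function names are hypothetical; production pipelines typically use much richer signals.

```python
def keep_document(text, min_words=5, min_alpha_ratio=0.6):
    """Heuristic quality check with assumed, untuned thresholds."""
    words = text.split()
    if len(words) < min_words:
        return False  # too short to carry useful signal
    # Share of characters that are letters or whitespace.
    clean = sum(ch.isalpha() or ch.isspace() for ch in text)
    return clean / len(text) >= min_alpha_ratio


def filter_corpus(docs):
    """Remove duplicates (ignoring case and spacing) and low-quality documents."""
    seen = set()
    kept = []
    for doc in docs:
        key = " ".join(doc.lower().split())
        if key in seen:
            continue
        seen.add(key)
        if keep_document(doc):
            kept.append(doc)
    return kept


sample = [
    "The quick brown fox jumps over the lazy dog.",
    "the quick brown fox  jumps over the lazy dog.",   # duplicate after normalization
    "$$$ ### 1234 5678 9012 !!!! ????",                # mostly symbols and digits
    "too short",
]
print(filter_corpus(sample))
```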

Advanced Training Techniques

Modern AI training techniques have also played a significant role in improving the performance of smaller models. Methods such as instruction tuning, reinforcement learning from human feedback, and improved optimization algorithms help models learn more effectively.

Instruction tuning allows models to better understand human instructions and respond more accurately to prompts. Reinforcement learning techniques further refine model behavior by encouraging useful responses and discouraging incorrect ones.

When these advanced techniques are applied to a 9B model, the results can sometimes surpass those of a larger model that was trained using older or less efficient methods.

Task-Specific Optimization

Another reason smaller models may outperform larger ones is task specialization. Many large AI models are designed to perform a wide variety of tasks, from answering questions to generating code and translating languages.

While this versatility is useful, it can also dilute performance on specific tasks. A smaller model that is optimized for a particular task (such as coding, reasoning, summarization, or data analysis) may outperform a general-purpose model in that domain.

For example, a 9B model specifically optimized for programming tasks might generate better code than a 120B model that was trained to handle many different tasks at once.
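A claim like this is usually checked by scoring both models on the same task-specific test set. The sketch below shows the shape of such a comparison using exact-match accuracy. The two "models" are stand-in functions with hard-coded answers so the example runs; their scores are made up and say nothing about real systems.

```python
def exact_match_accuracy(model, examples):
    """Fraction of examples where the model's answer equals the reference."""
    correct = sum(
        1 for prompt, expected in examples
        if model(prompt).strip() == expected.strip()
    )
    return correct / len(examples)


# Hypothetical test set of (prompt, reference answer) pairs.
examples = [
    ("reverse 'abc'", "cba"),
    ("uppercase 'abc'", "ABC"),
    ("length of 'abc'", "3"),
]

# Stand-in models: lookup tables used only to make the sketch runnable.
specialist_answers = {"reverse 'abc'": "cba", "uppercase 'abc'": "ABC", "length of 'abc'": "3"}
generalist_answers = {"reverse 'abc'": "cba", "uppercase 'abc'": "Abc", "length of 'abc'": "3"}


def specialist(prompt):
    return specialist_answers.get(prompt, "")


def generalist(prompt):
    return generalist_answers.get(prompt, "")


print("specialist:", exact_match_accuracy(specialist, examples))
print("generalist:", exact_match_accuracy(generalist, examples))
```

The important design choice is that both models see identical prompts and are scored by the same rule, so any difference reflects the models rather than the evaluation.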

Faster Inference and Real-Time Performance

In real-world applications, performance is not only measured by accuracy. Speed and efficiency are also extremely important. Smaller models generally need less computation and less memory per generated token, which allows them to respond faster.

This faster inference time is valuable for applications such as:

- Real-time chat assistants
- Mobile AI applications
- Edge computing devices
- Customer service automation
- Business workflow tools

Because a 9B model uses fewer resources, it may deliver responses faster and operate more efficiently than a much larger model. In many cases, this efficiency can make smaller models more practical for production environments.
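A rough way to see the speed difference: when a dense model generates one token at a time for a single user, it typically has to read all of its weights from memory for every token, so throughput is bounded by memory bandwidth divided by model size. The sketch below applies that back-of-envelope bound. The bandwidth figure is a hypothetical round number, and the estimate ignores batching, multi-device setups, and sparse (mixture-of-experts) models that activate only part of their weights.

```python
def decode_tokens_per_second(n_params, bytes_per_param, bandwidth_bytes_per_s):
    """Upper bound on single-stream decoding speed for a dense model."""
    weight_bytes = n_params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes


BANDWIDTH = 1e12  # assumed 1 TB/s of memory bandwidth, for illustration only

small = decode_tokens_per_second(9e9, 2, BANDWIDTH)     # 9B model, 16-bit weights
large = decode_tokens_per_second(120e9, 2, BANDWIDTH)   # 120B model, 16-bit weights
print(f"9B:   about {small:.0f} tokens/s at most")
print(f"120B: about {large:.0f} tokens/s at most")
```

Under these assumptions the bound scales inversely with parameter count, so the 9B model's ceiling is about 13 times higher than the 120B model's.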

Reduced Risk of Overfitting

Large models with extremely high parameter counts can sometimes suffer from overfitting, especially when the training data is small relative to the model's capacity. Overfitting occurs when a model memorizes the training data instead of learning general patterns that apply to new data.

When this happens, the model may perform well during training but struggle when faced with real-world inputs that differ from the training dataset.

Smaller models with better regularization techniques often generalize more effectively. This means they can perform more consistently when dealing with new and unseen information.
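Early stopping is one simple regularization technique that illustrates the idea: training stops once the loss on held-out validation data stops improving, even if the training loss keeps falling. The sketch below is a minimal version with made-up loss curves; the function name and the patience value are assumptions.

```python
def best_epoch_with_patience(val_losses, patience=2):
    """Return the epoch with the lowest validation loss seen before stopping."""
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break  # no improvement for `patience` epochs in a row
    return best_epoch


# Made-up curves: training loss keeps falling while validation loss turns upward.
train_losses = [1.00, 0.70, 0.50, 0.35, 0.20, 0.10]
val_losses = [1.00, 0.80, 0.70, 0.72, 0.75, 0.90]

stop_at = best_epoch_with_patience(val_losses)
print(f"keep the checkpoint from epoch {stop_at}")
print(f"train/validation gap at the last epoch: {val_losses[-1] - train_losses[-1]:.2f}")
```

A widening gap between training and validation loss, as in the last epochs here, is the usual sign that the model has started to memorize rather than generalize.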

Model Compression and Distillation

Recent advances in AI have introduced powerful techniques such as model distillation, pruning, and quantization. These techniques allow developers to compress large models into smaller versions while preserving most of their capabilities.

Model distillation, for example, involves training a smaller "student" model to reproduce the outputs of a larger "teacher" model. The student absorbs much of the teacher's knowledge while using far fewer parameters.

As a result, the optimized smaller model may perform just as well as the original larger model, or sometimes even better.
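The core of distillation can be sketched as a loss that pushes the smaller model's output distribution toward the larger model's. The version below uses the widely used formulation with temperature-softened probabilities and a KL divergence scaled by the temperature squared. It is written in plain Python for clarity; the logits are invented, and a real training loop would compute this with a tensor library and usually combine it with the ordinary label loss.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)                      # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    kl = sum(t * math.log(t / s) for t, s in zip(teacher, student) if t > 0)
    return kl * temperature ** 2


# Invented logits over a three-token vocabulary.
teacher_logits = [4.0, 1.0, 0.5]
print(distillation_loss([3.5, 1.2, 0.4], teacher_logits))   # student close to the teacher
print(distillation_loss([0.5, 1.0, 4.0], teacher_logits))   # student far from the teacher
```

The loss is zero when the student matches the teacher exactly and grows as their predictions diverge, which is what gives the smaller model a richer training signal than hard labels alone.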

Lower Costs and Better Accessibility

One of the biggest advantages of smaller AI models is cost efficiency. Training and operating very large models requires enormous computing power, specialized hardware, and large amounts of energy.

Smaller models are much easier to deploy and maintain. They can run on less powerful hardware, making them accessible to startups, researchers, and smaller companies.
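The hardware gap is easy to estimate from the weights alone: memory needed is roughly the parameter count times the bytes stored per parameter. The sketch below computes this for both model sizes at a few common precisions. It counts only the weights and leaves out activations, caches, and runtime overhead, so real requirements are higher; the function name is an assumption.

```python
def weight_memory_gb(n_params, bits_per_param):
    """Approximate memory for model weights, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


for name, n_params in [("9B", 9e9), ("120B", 120e9)]:
    for bits in (16, 8, 4):
        gb = weight_memory_gb(n_params, bits)
        print(f"{name} model at {bits}-bit: about {gb:.1f} GB")
```

At 16-bit precision this gives about 18 GB for the 9B model and about 240 GB for the 120B model, which is the difference between a single accelerator and a multi-device server.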

This accessibility allows more organizations to experiment with AI technology without needing massive infrastructure.

The Industry Shift Toward Efficient AI

The idea that “bigger is always better” is slowly losing ground in the AI industry. While extremely large models still play an important role in research and development, many companies are now focusing on efficient AI systems rather than simply increasing model size.

Developers are working on:

- More efficient neural network architectures
- Better training pipelines
- Improved dataset quality
- Hybrid AI models
- Specialized task-focused models

This shift is helping create AI systems that are both powerful and practical.

The Future of AI Model Development

Looking ahead, the future of artificial intelligence will likely involve a balance between model size and efficiency. Instead of focusing only on building massive models, researchers will continue exploring smarter approaches to AI design.

We may see more modular AI systems, where multiple specialized models work together to solve complex problems. These systems could combine the strengths of both large and small models.

Additionally, advances in hardware acceleration, distributed computing, and AI optimization techniques will further improve model efficiency.

Conclusion

The case of a 9B AI model outperforming a 120B model highlights an important lesson in artificial intelligence development. Model size alone does not determine performance. Factors such as architecture design, training data quality, optimization methods, and task specialization play equally important roles.

Smaller models that are carefully designed and well-trained can sometimes deliver better results than much larger systems. This realization is encouraging developers to focus on smarter AI design rather than simply building bigger models.