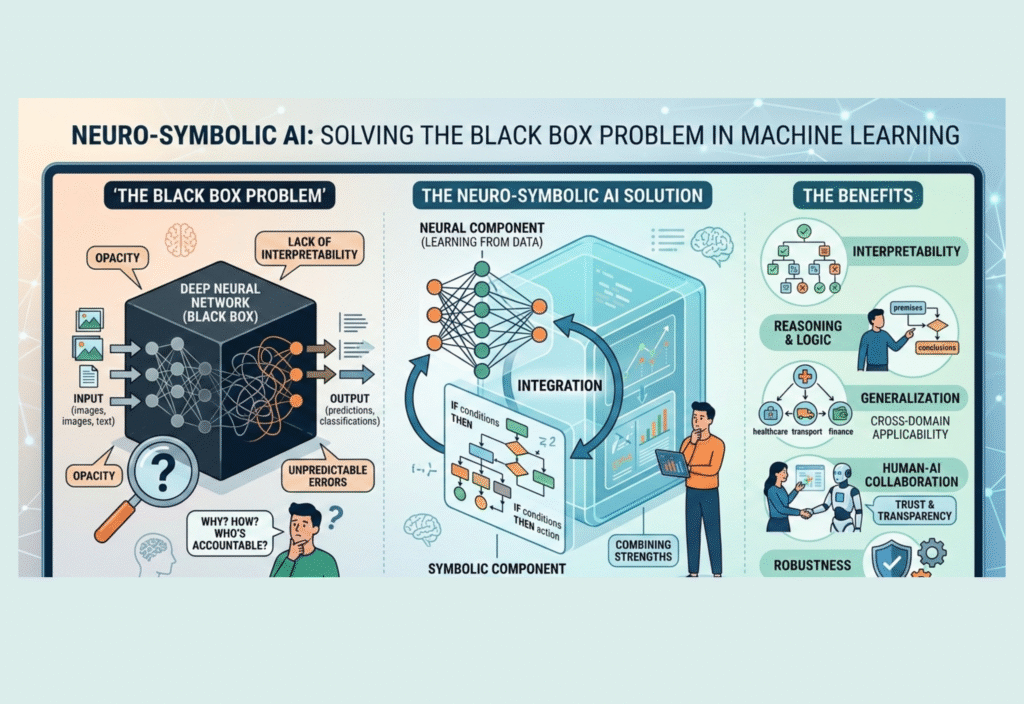

Artificial Intelligence has made enormous progress over the past decade. Technologies such as deep learning have enabled computers to recognize images, translate languages, and even generate human-like text. Many systems built with neural networks now outperform humans in certain specialized tasks. However, despite their impressive performance, these models often suffer from one critical limitation: they behave like a black box.

A black-box model produces results, but it does not clearly explain how it reached those conclusions. In sensitive areas such as healthcare, finance, law, and scientific research, this lack of transparency can create serious problems. If an AI system recommends a medical diagnosis or denies a loan application, people need to understand the reasoning behind that decision.

To address this challenge, researchers are turning to Neuro-Symbolic AI, an approach that combines neural networks with symbolic reasoning. The goal of this hybrid approach is to make artificial intelligence both powerful and understandable.

Understanding the Black Box Problem

Most modern AI systems rely on deep neural networks. These networks learn patterns from massive datasets by adjusting millions, or even billions, of parameters. While they are excellent at recognizing complex patterns, their decisions emerge from the combined effect of all those parameters, so the internal decision process is difficult for humans to interpret.

This lack of transparency creates several issues:

1. Lack of Trust

Users and organizations may hesitate to rely on AI decisions when they cannot understand the reasoning behind them.

2. Difficult Debugging

When an AI system makes a mistake, developers often struggle to determine why it happened.

3. Ethical and Legal Concerns

In regulated industries, organizations must explain automated decisions to ensure fairness and accountability.

4. Limited Generalization

Pure neural systems often struggle with multi-step logical reasoning and structured knowledge, which limits how well they generalize beyond the patterns seen in training.

Because of these limitations, the demand for more interpretable AI systems continues to grow.

What Is Neuro-Symbolic AI?

Neuro-Symbolic AI combines two different approaches to artificial intelligence:

Neural Networks

These systems learn patterns from data. They excel at perception tasks such as image recognition, speech processing, and natural language understanding.

Symbolic AI

Symbolic systems use logic, rules, and knowledge graphs to perform reasoning. Because every conclusion follows from explicit rules, they are transparent, structured, and easier to interpret than neural networks.
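To make rule-based reasoning concrete, here is a minimal sketch of forward chaining, one common way a symbolic system derives new facts from rules. The facts, rules, and the function name forward_chain are invented for illustration and are not taken from any particular library.

```python
def forward_chain(facts, rules):
    """Apply rules until no new fact can be derived.

    Returns the full set of known facts plus a trace that records
    which rule produced each derived fact.
    """
    known = set(facts)
    trace = []
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                trace.append(f"{' & '.join(sorted(premises))} -> {conclusion}")
                changed = True
    return known, trace


# Hypothetical toy rules: (set of premises, conclusion).
rules = [
    (frozenset({"has_feathers", "lays_eggs"}), "is_bird"),
    (frozenset({"is_bird", "cannot_fly", "swims"}), "is_penguin"),
]
facts = {"has_feathers", "lays_eggs", "cannot_fly", "swims"}

known, trace = forward_chain(facts, rules)
for step in trace:
    print(step)
```

The trace is what makes the result interpretable: each derived fact can be traced back to the rule and premises that produced it.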

Neuro-Symbolic AI merges these strengths into a single framework. Neural networks handle complex pattern recognition, while symbolic reasoning systems provide logical explanations and structured decision-making.

This integration helps build AI systems that are both intelligent and explainable.

How Neuro-Symbolic Systems Work

A neuro-symbolic architecture typically operates in several stages.

First, neural networks analyze raw data such as images, text, or audio. They extract patterns and convert them into meaningful representations.

Next, the symbolic reasoning layer interprets these patterns using predefined rules, knowledge graphs, or logical frameworks.

Finally, the system produces an output that includes both a decision and a reasoning path. This reasoning makes it easier for humans to understand how the AI reached its conclusion.

For example, in a medical diagnostic system:

A neural network analyzes medical images.

The symbolic layer compares the findings against rules that encode medical knowledge.

The system provides a diagnosis along with logical explanations.

Because each conclusion is tied to explicit rules, a clinician can check which findings and which rules led to the diagnosis.
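The three stages described above can be sketched in a few lines of Python. This is only an illustration under strong assumptions: neural_stage is a stand-in that returns hard-coded confidence scores instead of running a real model, and the rules, the threshold, and all names are hypothetical and carry no clinical meaning.

```python
from dataclasses import dataclass


@dataclass
class Diagnosis:
    label: str
    reasoning: list


def neural_stage(image_id):
    """Stand-in for a trained image model: maps findings to confidences.

    The scores are hard-coded placeholders, not real model output.
    """
    fake_outputs = {
        "scan_001": {"opacity_in_lung": 0.91, "enlarged_heart": 0.12},
    }
    return fake_outputs[image_id]


# Hypothetical rules: (required facts, conclusion). Not clinical guidance.
RULES = [
    ({"opacity_in_lung", "fever"}, "suspected pneumonia"),
    ({"enlarged_heart"}, "suspected cardiomegaly"),
]


def symbolic_stage(scores, patient_facts, threshold=0.5):
    """Turn confident findings into facts, then apply the first matching rule."""
    reasoning = []
    facts = set(patient_facts)
    for finding, score in scores.items():
        if score >= threshold:
            facts.add(finding)
            reasoning.append(
                f"image finding '{finding}' accepted (confidence {score:.2f})"
            )
    for required, conclusion in RULES:
        if required <= facts:
            reasoning.append(f"rule fired: {sorted(required)} -> {conclusion}")
            return Diagnosis(conclusion, reasoning)
    reasoning.append("no rule matched")
    return Diagnosis("inconclusive", reasoning)


result = symbolic_stage(neural_stage("scan_001"), patient_facts={"fever"})
print(result.label)
for line in result.reasoning:
    print(" -", line)
```

The output carries both the decision and the reasoning path, so a reviewer can see which finding was accepted and which rule produced the label.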

Key Advantages of Neuro-Symbolic AI

1. Explainable Decisions

One of the biggest benefits is improved explainability. Symbolic reasoning allows AI systems to provide clear logical steps behind their decisions, which increases trust among users.

2. Better Reasoning Capabilities

Neural networks are powerful at recognizing patterns, but they often struggle with multi-step logical reasoning. Symbolic systems, by contrast, excel at reasoning over explicit rules but cannot learn from raw data on their own. Combining them allows AI to handle both tasks effectively.

3. Improved Data Efficiency

Neural networks often require enormous datasets for training. Symbolic reasoning can incorporate existing knowledge directly as rules, which can reduce the amount of data the system needs to learn from.

4. Enhanced Reliability

Neuro-symbolic models can verify decisions using logical constraints. A decision that violates a constraint can be rejected or flagged for review, which can reduce errors and make systems safer for critical applications.
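As a rough sketch of what verifying a decision against logical constraints might look like, the example below checks a model's proposed loan decision before it is accepted. The constraint wording, the field names, and the functions check_constraints and verified_decision are all hypothetical.

```python
def check_constraints(prediction, constraints):
    """Return the names of all constraints that the prediction violates."""
    return [name for name, holds in constraints if not holds(prediction)]


# Hypothetical constraints: (description, predicate over the prediction).
CONSTRAINTS = [
    (
        "approved loans need a verified income",
        lambda p: not p["approved"] or p["income_verified"],
    ),
    (
        "amount must not exceed the limit",
        lambda p: p["amount"] <= p["limit"],
    ),
]


def verified_decision(prediction):
    """Accept the model's proposal only if every constraint holds."""
    violations = check_constraints(prediction, CONSTRAINTS)
    if violations:
        return {"status": "needs_review", "violations": violations}
    return {"status": "accepted", "violations": []}


# Placeholder values standing in for a neural model's output.
ok = verified_decision(
    {"approved": True, "income_verified": True, "amount": 5000, "limit": 10000}
)
bad = verified_decision(
    {"approved": True, "income_verified": False, "amount": 20000, "limit": 10000}
)
print(ok["status"], bad["status"], bad["violations"])
```

Keeping each constraint as a named predicate means a rejected decision comes with a readable list of the rules it broke.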

5. Greater Transparency and Accountability

Explainable AI systems are easier to audit, regulate, and improve. This is particularly important in industries with strict regulatory requirements.

Real-World Applications

Neuro-Symbolic AI is already being explored across multiple industries.

Healthcare

Doctors can use AI tools that not only detect diseases but also explain the reasoning behind their diagnoses. This increases trust and improves collaboration between humans and machines.

Finance

Financial institutions can apply neuro-symbolic models to detect fraud while providing clear explanations for flagged transactions.

Autonomous Systems

Self-driving vehicles must make decisions in complex environments. Combining neural perception with symbolic reasoning can help vehicles understand traffic rules and explain their actions.

Scientific Research

Researchers use neuro-symbolic systems to analyze complex data while enforcing known scientific laws and domain rules.

Enterprise Automation

Businesses can build intelligent automation tools that follow logical policies while learning from real-world data.

Challenges and Limitations

Although promising, Neuro-Symbolic AI still faces several challenges.

System Complexity

Combining neural networks with symbolic reasoning can create complex architectures that require careful design.

Integration Difficulties

Aligning data-driven learning with rule-based logic is technically challenging.
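One source of this difficulty is that neural networks output continuous confidences while classical logic expects true or false. The article does not name a specific remedy. A common one, shown here purely as an illustration, is to relax the logical connectives into continuous functions using the product t-norm. The function names are hypothetical.

```python
def soft_and(a, b):
    """Product t-norm: equals Boolean AND when a and b are 0 or 1."""
    return a * b


def soft_or(a, b):
    """Probabilistic sum, the dual of the product t-norm."""
    return a + b - a * b


def soft_not(a):
    return 1.0 - a


def soft_implies(a, b):
    """'a implies b' written as (not a) or b."""
    return soft_or(soft_not(a), b)


def hard(a, threshold=0.5):
    """Thresholding turns a confidence into a Boolean and drops the uncertainty."""
    return a >= threshold


# Two moderately confident detections (placeholder values).
p_red_light, p_car_moving = 0.6, 0.6

# Thresholding first treats both as certain facts.
hard_both = hard(p_red_light) and hard(p_car_moving)

# The soft version keeps the combined uncertainty visible.
soft_both = soft_and(p_red_light, p_car_moving)

print(hard_both, round(soft_both, 2))
```

With hard thresholds, two 0.6 confidences combine into a plain True, while the soft conjunction yields a noticeably lower score. Because these functions are smooth, they can also be used inside a training objective, although choosing the relaxation is itself a design decision with trade-offs.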

Computational Requirements

Some neuro-symbolic models demand significant computing resources.

Despite these challenges, ongoing research continues to improve hybrid AI systems.

The Future of Explainable AI

The future of artificial intelligence will likely involve systems that are not only powerful but also understandable. As AI becomes more integrated into everyday decision-making, explainability will become a core requirement rather than an optional feature.

Neuro-Symbolic AI represents a major step toward building trustworthy artificial intelligence. By combining the strengths of neural learning and symbolic reasoning, researchers are creating systems that can both learn from data and explain their decisions.

This hybrid approach may play a crucial role in solving one of the most important challenges in modern machine learning: turning black-box models into transparent and accountable AI systems.