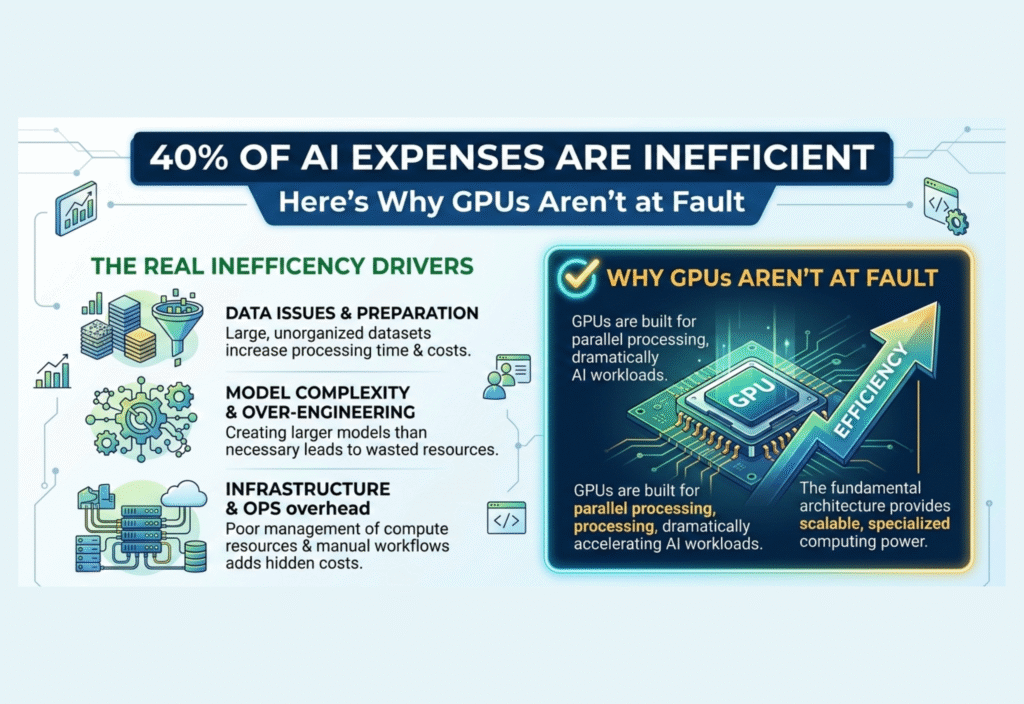

Inefficient AI spending is a growing concern for organizations investing in artificial intelligence. Studies suggest that nearly 40% of AI spending is wasted, and companies across industries must address this waste to maximize the return on their AI investments.

Many organizations initially blame GPUs, assuming the expensive hardware is underperforming. In reality, GPUs are rarely the root cause. The waste usually stems from workflow mismanagement, poorly optimized models, underutilized infrastructure, and unbalanced data pipelines.

Understanding the true sources of AI inefficiency is critical for businesses aiming to optimize costs, scale efficiently, and fully leverage their investments in AI technology.

The Growing Cost of AI Infrastructure

Over the past decade, AI has become a central component of business operations. Companies use AI for:

Predictive analytics – forecasting sales, customer behavior, or system failures

Natural language processing – chatbots, virtual assistants, and sentiment analysis

Computer vision – image recognition, medical imaging, and autonomous navigation

Recommendation engines – personalized content and product recommendations

To support these tasks, businesses rely on high-performance GPUs such as the NVIDIA A100 or H100, cloud-based GPU clusters from providers such as Google Cloud or Microsoft Azure, and specialized AI frameworks.

While these investments deliver exceptional computational power, organizations often mismanage workloads, resulting in massive underutilization and financial waste.

Why GPUs Are Not the Problem

GPUs are designed for parallel computation, making them ideal for AI model training and inference. When GPU usage appears low, it often points to process inefficiencies rather than hardware failure.

Common Misconceptions

Underutilized GPUs = GPU inefficiency

In most cases, the GPU is fully capable but sits idle because of delays elsewhere in the workflow.

Expensive hardware = wasted money

High costs don’t automatically mean inefficiency. Waste arises when the hardware is not used optimally.

Cloud GPU allocation = full utilization

Reserving a cloud instance doesn’t guarantee efficiency; improper scheduling and workload management reduce effective use.

Major Sources of AI Expense Inefficiency

1. Poor Resource Scheduling

Many organizations allocate GPU clusters statically rather than dynamically. Teams often reserve multiple GPUs for experiments that don’t require full capacity, and those GPUs sit partly idle while other teams wait for resources. The result is idle hardware and wasted spending.

Solution: Implement dynamic GPU scheduling that allocates resources based on workload demands, maximizing utilization.
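
To see why pooling helps, consider a toy simulation. It is a minimal sketch that assumes discrete time steps, known job durations, and a strict first-in-first-out queue; `Job` and `simulate` are hypothetical names for illustration, not part of any real scheduler. It compares two teams that each statically own half of an 8-GPU cluster with the same jobs drawing from one shared pool.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    gpus: int      # GPUs the job actually needs
    duration: int  # time steps the job runs once started


def simulate(pool_size: int, jobs: list[Job]) -> tuple[int, float]:
    """Run jobs on a pool of GPUs, admitting them in strict FIFO order.

    Returns (makespan in time steps, average fraction of GPUs busy).
    """
    if not jobs:
        return 0, 0.0
    if any(job.gpus > pool_size for job in jobs):
        raise ValueError("a job needs more GPUs than the pool has")
    queue = deque(jobs)
    running: list[list] = []  # [job, remaining_steps] pairs
    free, t, busy_gpu_steps = pool_size, 0, 0
    while queue or running:
        # Grant GPUs to waiting jobs, in order, as long as the next one fits.
        while queue and queue[0].gpus <= free:
            job = queue.popleft()
            free -= job.gpus
            running.append([job, job.duration])
        # Advance one time step and count how many GPUs were busy during it.
        busy_gpu_steps += pool_size - free
        t += 1
        for entry in running:
            entry[1] -= 1
        # Return GPUs from finished jobs to the pool.
        free += sum(job.gpus for job, remaining in running if remaining == 0)
        running = [entry for entry in running if entry[1] > 0]
    return t, busy_gpu_steps / (pool_size * t)


# Team A has four 4-GPU jobs; team B has one small 2-GPU job.
team_a = [Job(f"a{i}", gpus=4, duration=2) for i in range(4)]
team_b = [Job("b0", gpus=2, duration=2)]

# Static: each team owns a fixed 4-GPU half of the cluster.
print(simulate(4, team_a))  # (8, 1.0): team A's jobs queue behind each other
print(simulate(4, team_b))  # (2, 0.5): team B's half sits mostly idle
# Dynamic: both teams draw from one shared 8-GPU pool.
print(simulate(8, team_a + team_b))  # (6, 0.75)
```

Under the static split, the cluster needs 8 steps and is busy for 36 of 64 GPU-steps (about 56% utilization); the shared pool finishes the same work in 6 steps at 75%. Production schedulers layer priorities, preemption, and backfilling on top of this basic idea.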

2. Inefficient Data Pipelines

AI models require large datasets for training. If data ingestion or preprocessing is slow, GPUs remain idle while waiting for input.

Solution: Optimize data pipelines, implement caching, and use high-throughput storage to ensure GPUs remain productive.
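
One common way to keep GPUs fed is to overlap data loading with computation. If loading a batch takes L seconds and a training step takes C seconds, a serial loop spends about L + C per batch, while an overlapped loop approaches max(L, C). The sketch below shows the idea with a background thread and a bounded queue from the Python standard library; `prefetch` and `load_batches` are hypothetical helpers, and `time.sleep` stands in for real I/O and GPU work. Frameworks provide more capable equivalents (for example, PyTorch's `DataLoader` with worker processes).

```python
import queue
import threading
import time
from typing import Iterable, Iterator, TypeVar

T = TypeVar("T")


def prefetch(source: Iterable[T], depth: int = 2) -> Iterator[T]:
    """Yield items from `source` while a background thread keeps up to
    `depth` loaded items buffered, so the consumer rarely waits on I/O."""
    if depth < 1:
        raise ValueError("depth must be at least 1")
    buf: queue.Queue = queue.Queue(maxsize=depth)

    def producer() -> None:
        try:
            for item in source:
                buf.put(("item", item))  # blocks while the buffer is full
            buf.put(("done", None))
        except Exception as exc:  # hand loader errors to the consumer
            buf.put(("error", exc))

    # Daemon thread: if the consumer stops early, it won't keep Python alive.
    threading.Thread(target=producer, daemon=True).start()
    while True:
        kind, value = buf.get()
        if kind == "item":
            yield value
        elif kind == "done":
            return
        else:
            raise value


def load_batches(n: int) -> Iterator[int]:
    """Stand-in for slow data loading and preprocessing."""
    for i in range(n):
        time.sleep(0.05)
        yield i


start = time.perf_counter()
for batch in prefetch(load_batches(10)):
    time.sleep(0.05)  # stand-in for one GPU training step on `batch`
print(f"elapsed: {time.perf_counter() - start:.2f}s")
```

With loading and compute each taking about 50 ms, the overlapped loop should finish in roughly half the time of a serial loop over the same ten batches, although exact timings depend on the machine.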

3. Over-Provisioning Infrastructure

Companies sometimes purchase excess hardware in anticipation of future growth. During early development, much of that infrastructure sits unused.

Solution: Adopt scalable infrastructure with cloud-based elasticity, adjusting resources as demand grows.
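
A back-of-the-envelope comparison shows how much capacity sits unused when hardware is sized for peak demand from day one. The sketch below assumes a hypothetical demand curve and a single flat price per GPU per billing period for both strategies. In practice, on-demand cloud rates usually differ from the amortized cost of owned hardware, so treat it as an illustration of utilization, not a pricing model.

```python
def provisioning_costs(demand: list[int], rate: float = 1.0) -> dict[str, float]:
    """Compare buying peak capacity up front with scaling to actual demand.

    `demand` lists the GPUs needed in each billing period; `rate` is a
    hypothetical cost per GPU per period, assumed equal for both strategies.
    """
    peak, periods = max(demand), len(demand)
    used = sum(demand)  # GPU-periods of real work
    return {
        "fixed": peak * periods * rate,  # pay for peak capacity every period
        "elastic": used * rate,          # pay only for what each period needs
        "idle_share": 1 - used / (peak * periods),  # unused share of fixed capacity
    }


# Hypothetical early-stage growth: demand doubles twice over six periods.
costs = provisioning_costs([2, 2, 4, 4, 8, 8])
print(costs["fixed"], costs["elastic"], round(costs["idle_share"], 2))  # 48.0 28.0 0.42
```

In this made-up ramp-up, roughly 42% of the peak-sized capacity would sit idle.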

4. Suboptimal Model Design

AI models with unnecessarily high complexity consume more GPU cycles than needed. Overparameterized models increase training time and costs without improving performance.

Solution: Apply model optimization techniques like pruning, quantization, or knowledge distillation to reduce computational demand.
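
To make two of these techniques concrete, the toy sketch below applies magnitude pruning and symmetric 8-bit quantization to a short list of weights in plain Python. It is illustrative only. Real toolkits (for example, PyTorch's pruning and quantization utilities) work per layer or per channel and often need calibration or fine-tuning afterward. They also save compute only when the runtime actually exploits sparsity or int8 arithmetic. Knowledge distillation, which trains a smaller model to mimic a larger one, is not shown.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    drop = set(smallest)
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: each w is approximated by
    scale * q, with q an integer in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    return [scale * v for v in q]


weights = [0.5, -1.27, 0.02, 0.9, -0.01, 0.3]
print(magnitude_prune(weights, 0.5))  # [0.5, -1.27, 0.0, 0.9, 0.0, 0.0]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
# Each int8 value takes 1 byte instead of 4 for float32, and the rounding
# error per weight is at most scale / 2.
print(f"max reconstruction error: {max_error:.4f} (bound: {scale / 2:.4f})")
```

Storing int8 instead of float32 cuts weight memory roughly fourfold, at the cost of a bounded rounding error per weight.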

5. Lack of Monitoring and Analytics

Without monitoring tools, organizations cannot track GPU utilization effectively. Idle or misallocated resources remain unnoticed, contributing to inefficiency.

Solution: Deploy real-time monitoring dashboards to track usage, spot bottlenecks, and optimize resource allocation.
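
A real-time dashboard is usually built on a metrics stack (NVIDIA's DCGM exporter feeding Prometheus and Grafana is one common setup), but the core check is simple. The sketch below polls `nvidia-smi` once, using its `--query-gpu` CSV mode, and flags GPUs below a utilization threshold. The 10% threshold and the helper names are illustrative assumptions. A single snapshot can be misleading, so in practice you would sample repeatedly and alert only on sustained idleness.

```python
import subprocess

# nvidia-smi's query mode prints one CSV line per GPU, e.g. "0, 87, 30512".
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,utilization.gpu,memory.used",
    "--format=csv,noheader,nounits",
]


def read_gpu_stats() -> str:
    """Return nvidia-smi's raw CSV output (needs an NVIDIA driver installed)."""
    return subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout


def find_idle_gpus(csv_text: str, util_threshold: int = 10) -> list[int]:
    """Return indices of GPUs whose utilization (%) is below `util_threshold`."""
    idle = []
    for line in csv_text.strip().splitlines():
        index, util, _memory_mib = (field.strip() for field in line.split(","))
        if int(util) < util_threshold:
            idle.append(int(index))
    return idle


# Offline example with sample output; on a GPU host, pass read_gpu_stats() instead.
sample = "0, 92, 30512\n1, 3, 512\n2, 0, 0\n"
print(find_idle_gpus(sample))  # [1, 2]
```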

The Role of Cloud Infrastructure

Cloud providers offer flexibility and scalability but also create new challenges:

Idle instances: Forgetting to shut down unused GPUs results in ongoing charges.

Over-allocation: Reserving more GPUs than necessary wastes money.

Complex billing: Multi-region deployments can increase costs if not monitored.

Best Practice: Implement cloud management tools and automated shutdown policies to reduce waste.
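
An automated shutdown policy boils down to a rule such as "stop any instance whose GPUs have been idle for the last N minutes." The sketch below implements only that decision logic. The `Instance` record, the 5% threshold, and the 30-minute window are hypothetical, and the actual stop request would go through your cloud provider's own SDK or CLI, which is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    name: str
    util_history: list[float]  # GPU utilization %, one sample per minute, oldest first


def instances_to_stop(
    fleet: list[Instance], idle_threshold: float = 5.0, idle_minutes: int = 30
) -> list[str]:
    """Return names of instances idle for at least the last `idle_minutes` minutes.

    Instances with less history than the window are kept running, so a
    freshly started machine is not stopped before it has had a chance to work.
    """
    if idle_minutes < 1:
        raise ValueError("idle_minutes must be at least 1")
    to_stop = []
    for inst in fleet:
        recent = inst.util_history[-idle_minutes:]
        if len(recent) == idle_minutes and all(u < idle_threshold for u in recent):
            to_stop.append(inst.name)
    return to_stop


fleet = [
    Instance("train-a", [85.0] * 60),                  # busy training job
    Instance("notebook-b", [40.0] * 10 + [0.0] * 40),  # forgotten after 10 minutes
    Instance("eval-c", [0.0] * 20),                    # started 20 minutes ago
]
print(instances_to_stop(fleet))  # ['notebook-b']
```

Requiring a full window of idle samples trades a little extra spend for protection against stopping jobs that are merely starting up or briefly waiting on data.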

Strategies to Reduce AI Expenses Inefficiency

Businesses can significantly cut wasted AI spending with the right strategies:

Dynamic Resource Scheduling – Allocate GPUs based on real-time workload demand.

Optimized Data Pipelines – Ensure continuous GPU feed with high-performance storage and preprocessing.

Model Optimization – Reduce resource requirements with pruning, quantization, or smaller architectures.

Monitoring Tools – Track GPU utilization to identify inefficiencies.

Team Training – Educate engineers in scalable, cost-efficient AI practices.

Cloud Cost Management – Automate instance shutdown and resource scaling.

Implementing these steps can transform underutilized infrastructure into a cost-efficient, high-performance AI environment.

Case Study: AI Infrastructure Efficiency

A leading e-commerce company recently analyzed its AI infrastructure:

Problem: 45% of GPUs appeared underutilized.

Initial assumption: GPUs were inefficient.

Analysis: Delays in data preprocessing and poor scheduling caused idle time.

Solution: Optimized pipelines, applied dynamic scheduling, and adjusted model architectures.

Result: GPU utilization increased by 70%, while AI infrastructure costs dropped by 35%.

This example highlights a common pattern: hardware rarely causes inefficiency on its own; human and software workflow mismanagement is usually the culprit.

Emerging Technologies to Improve Efficiency

AI Model Compression Tools – Reduce GPU cycles with little or no loss of accuracy.

Hybrid Cloud Architectures – Combine on-premises and cloud resources to optimize costs.

Automated Scheduling Algorithms – AI-driven scheduling that allocates resources dynamically.

Simulation-Based Testing – Validate models without overusing production GPUs.

These technologies help AI teams maximize the ROI of expensive GPU resources.

The Future of AI Spending

As AI adoption grows, businesses must recognize that hardware costs alone don’t define efficiency. Organizations that focus on workflow optimization, data management, and intelligent scheduling will see the biggest reductions in wasted spending.

GPUs will continue to evolve, offering more performance per dollar. However, the real cost savings come from smarter usage rather than replacing hardware.

Conclusion

While headlines often suggest GPUs are to blame for inefficient AI spending, the truth is more nuanced. Nearly 40% of AI spending may be wasted, but most of that waste comes from workflow, data, and infrastructure mismanagement rather than hardware limitations.

By optimizing resource scheduling, data pipelines, model architectures, and monitoring, organizations can drastically reduce wasted spending. GPUs themselves remain highly efficient and essential tools for AI workloads.

The lesson is clear: the challenge is not the GPU but how organizations use it. Efficient AI infrastructure management, rather than hardware replacement, is the key to unlocking cost-effective and high-performance AI.