Artificial intelligence is transforming industries, economies, and everyday life. From smart assistants to advanced data analytics systems, AI is now deeply integrated into modern society. AI boundaries are becoming increasingly important as governments, technology companies, researchers, and international organizations work to define the ethical and legal limits of artificial intelligence. As AI systems grow more powerful, clear governance frameworks are essential to ensure innovation remains safe, fair, and beneficial for humanity.

Understanding AI Boundaries in the Modern World

AI boundaries refer to the legal, ethical, and technical limits placed on artificial intelligence systems. These limits guide how AI technologies are developed, trained, deployed, and monitored. Without defined AI governance, artificial intelligence could create risks such as privacy violations, biased decision-making, misinformation, or security threats.

The purpose of setting AI boundaries is not to restrict progress. Instead, it is to create a structured environment where responsible AI development can thrive. Clear AI regulation helps balance technological advancement with human rights, safety, and transparency.

Why AI Governance Is Necessary

Artificial intelligence operates using complex algorithms and massive datasets. These systems can influence hiring decisions, loan approvals, medical diagnoses, and even national security strategies. Because AI impacts critical areas of life, accountability and oversight are necessary.

Key reasons why AI boundaries matter include:

Preventing algorithmic bias and discrimination

Protecting user data and privacy

Ensuring transparency in automated decisions

Reducing risks of misuse

Promoting ethical AI innovation

Without structured AI governance, technological power could outpace societal readiness. Establishing responsible AI frameworks helps align artificial intelligence with the public interest.

The Role of Governments in Defining AI Boundaries

Governments play a central role in setting AI boundaries. Through legislation and regulatory policies, they define how artificial intelligence can be used within their jurisdictions. Data protection laws, cybersecurity regulations, and AI accountability standards are examples of legal frameworks shaping AI development.

Public institutions aim to ensure that:

AI systems are safe before public deployment

Companies follow transparency requirements

Personal data remains protected

High-risk AI applications undergo strict evaluation

Government oversight helps build trust in AI technologies while leaving room for innovation.

Technology Companies and Corporate Responsibility

Major technology companies design and deploy many AI systems used globally. Because of their influence, these organizations hold significant responsibility in establishing AI boundaries internally.

Many tech firms implement:

Responsible AI principles

Ethical review committees

Bias detection and mitigation tools

AI transparency reports

Corporate AI governance frameworks often focus on fairness, accountability, and explainability. By investing in safe AI research, companies help strengthen global standards for artificial intelligence.

Researchers and Academic Institutions

Universities and AI research centers contribute by studying both the benefits and risks of artificial intelligence. Researchers examine long-term AI safety, algorithmic transparency, and human-AI interaction.

Academic contributions include:

Developing fairness metrics

Creating interpretable AI models

Studying AI alignment and safety

Advising policymakers on AI risks

Through research and innovation, experts help refine AI boundaries based on evidence and ethical principles.
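To make "fairness metrics" a little more concrete, here is a minimal sketch of one widely discussed measure, the demographic parity difference: the gap between groups in how often a system returns a positive decision. The function name, the group labels, and the loan-style data are all hypothetical and chosen only for illustration. Real audits typically combine several metrics and far more data.

```python
from collections import defaultdict


def demographic_parity_difference(decisions, groups):
    """Return (gap, rates): the spread between the highest and lowest
    positive-decision rates across groups, plus the per-group rates."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        if decision:
            positives[group] += 1
    # Share of positive decisions within each group.
    rates = {g: positives[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


# Hypothetical decisions (1 = approved) for two made-up groups "a" and "b".
decisions = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap, rates = demographic_parity_difference(decisions, groups)
print(rates)  # per-group approval rates
print(gap)    # difference between the highest and lowest rate
```

A gap near zero means the groups receive positive decisions at similar rates. A large gap is a signal to investigate further, not proof of discrimination on its own.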

International Cooperation and Global Standards

Artificial intelligence does not stop at national borders. AI systems developed in one country may affect users worldwide. For this reason, global collaboration is essential in defining AI boundaries.

International discussions focus on:

Shared ethical guidelines

Cross-border data standards

AI safety cooperation

Responsible AI innovation

Global cooperation helps artificial intelligence evolve in a coordinated and secure manner.

Ethical Principles Guiding AI Boundaries

Ethics plays a foundational role in shaping AI boundaries. Ethical AI development focuses on human-centered design and social responsibility.

Core ethical principles include:

Transparency: AI decisions should be explainable and understandable.

Fairness: Systems must avoid discrimination and bias.

Accountability: Developers must take responsibility for AI outcomes.

Privacy: Personal information must be safeguarded.

Security: AI systems should be protected against misuse or cyber threats.

These principles guide responsible AI innovation and strengthen public trust.

Challenges in Setting AI Boundaries

Defining AI boundaries is complex. Artificial intelligence evolves rapidly, often faster than legal systems can adapt. Emerging technologies like generative AI, autonomous systems, and predictive analytics create new governance challenges.

Key challenges include:

Rapid technological change

Differences in international regulation

Balancing innovation with oversight

Addressing cultural and ethical differences

Because AI development is dynamic, governance frameworks must remain flexible and adaptive.

The Future of AI Boundaries

The future of AI governance will likely involve deeper collaboration between governments, private sector leaders, researchers, and civil society. Continuous monitoring, updated regulations, and transparent reporting will become essential parts of AI development.

AI boundaries will evolve as new technologies emerge. Responsible AI innovation requires constant evaluation to ensure artificial intelligence remains aligned with societal values.

Conclusion

AI boundaries are shaping the direction of artificial intelligence worldwide. Governments establish regulatory frameworks, technology companies implement responsible development practices, researchers provide critical insights, and international organizations encourage cooperation. Together, these stakeholders define the limits that ensure AI remains safe, ethical, and beneficial.

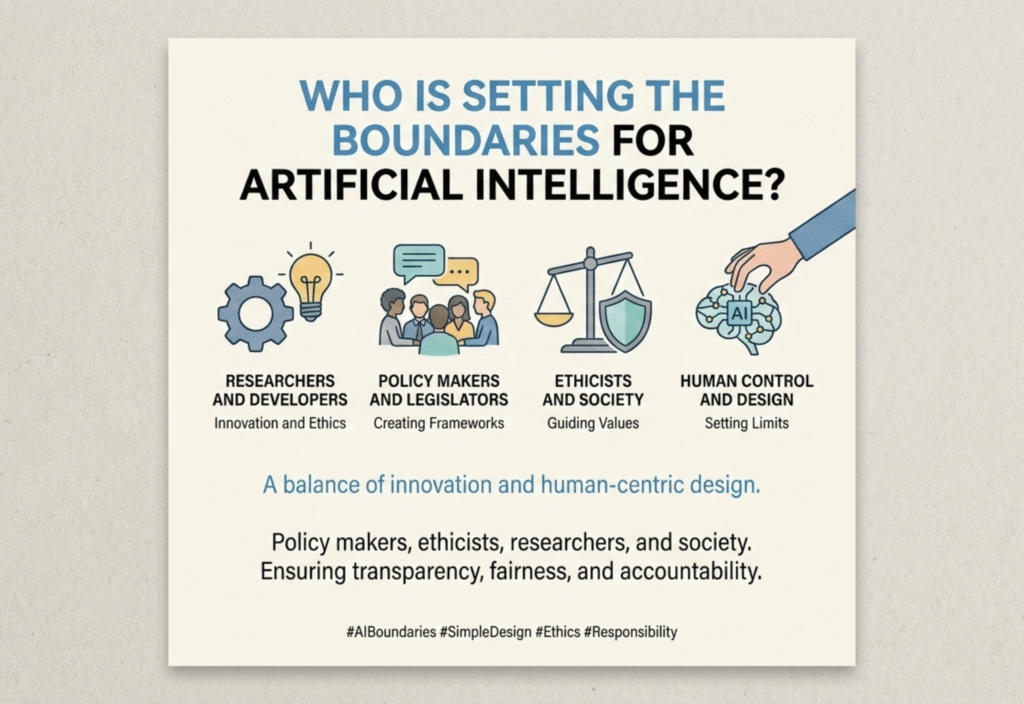

Artificial Intelligence (AI) is transforming the way people live, work, and communicate. From smart assistants and recommendation systems to advanced medical tools and self-driving technologies, AI is rapidly becoming a powerful force in modern society. However, as this technology grows more capable, an important question arises: Who is setting the boundaries for artificial intelligence?

AI has enormous potential to improve productivity, solve complex problems, and create new opportunities. At the same time, it also raises concerns about privacy, bias, safety, and ethical use. Because of these concerns, different groups around the world are working together to create rules, standards, and ethical guidelines that define how AI should be developed and used responsibly.

Why AI Boundaries Are Necessary

Artificial intelligence systems can process massive amounts of data and make decisions faster than humans. While this capability can lead to great innovation, it can also create risks if there are no clear boundaries.

Without proper guidelines, AI could be used in ways that harm individuals or society. For example, poorly designed algorithms might produce biased results, invade privacy, or spread misinformation. This is why AI governance, regulation, and ethical, responsible use have become important topics globally.

Setting boundaries does not mean stopping innovation. Instead, it helps ensure that AI technologies are built in a way that benefits people while minimizing harm.

The Role of Governments in AI Regulation

Governments play one of the most important roles in setting boundaries for artificial intelligence. They create laws, regulations, and policies that guide how AI systems can be developed and deployed.

Many countries are introducing AI regulations to address issues such as data protection, transparency, and accountability. Governments aim to ensure that companies follow ethical standards and that AI technologies are safe for public use.

Policies often focus on areas such as:

Data privacy and protection

Algorithm transparency

Prevention of discrimination and bias

Security and safety standards

By creating clear legal frameworks, governments help shape how AI technologies evolve while protecting public interests.

Technology Companies and AI Development

Large technology companies are among the primary developers of artificial intelligence systems. Because they build the tools and platforms used by millions of people, they also carry a major responsibility in setting AI boundaries.

Many tech companies have introduced AI ethics guidelines, internal review boards, and responsible AI frameworks. These measures aim to ensure that AI systems are designed with fairness, transparency, and accountability in mind.

Companies also invest heavily in research to reduce bias in algorithms, improve explainability, and enhance AI safety. Responsible innovation within the tech industry plays a major role in shaping the future of AI.

Researchers and Academic Institutions

Universities and research institutions are key contributors to defining AI boundaries. Researchers study how AI systems behave, identify potential risks, and develop new techniques to make AI safer and more reliable.

Academic experts often focus on topics such as:

AI ethics and fairness

Algorithmic transparency

Human-AI collaboration

Long-term AI safety

Their work helps guide policymakers, technology companies, and international organizations in understanding the broader impact of artificial intelligence.

International Organizations and Global Cooperation

Artificial intelligence is a global technology that affects people across different countries. Because of this, international cooperation is essential in setting consistent AI standards.

Global organizations bring together governments, researchers, and technology leaders to discuss ethical AI development and create shared guidelines. These discussions help ensure that AI systems are developed responsibly across borders.

International cooperation also helps address challenges such as cybersecurity, data sharing, and global AI governance.

The Role of Ethics in AI Boundaries

Ethics plays a central role in defining the boundaries of artificial intelligence. Ethical AI focuses on ensuring that technology respects human rights, promotes fairness, and avoids harm.

Some key ethical principles guiding AI development include:

Transparency: AI systems should be understandable and explainable.

Fairness: Algorithms should avoid discrimination and bias.

Accountability: Developers and organizations should be responsible for AI decisions.

Privacy: Personal data must be protected.

These principles help guide the development of trustworthy AI systems that benefit society.

Public Awareness and Community Involvement

The public also plays an important role in shaping AI boundaries. As AI becomes more integrated into everyday life, people are becoming more aware of how technology affects their privacy, security, and opportunities.

Public discussions, education, and awareness campaigns encourage responsible AI development and ensure that technology aligns with human values. Feedback from users, communities, and civil society groups can influence policies and corporate practices.

Challenges in Defining AI Boundaries

Despite ongoing efforts, setting boundaries for artificial intelligence is not always simple. AI technology evolves rapidly, often faster than regulations and policies can adapt.

Some key challenges include:

Rapid technological advancement

Differences in international regulations

Balancing innovation with safety

Addressing ethical concerns across cultures

Because of these challenges, AI governance must remain flexible and adaptable as the technology continues to evolve.

The Future of AI Governance

The future of artificial intelligence will likely involve stronger collaboration between governments, technology companies, researchers, and international organizations. By working together, these groups can create balanced frameworks that support innovation while ensuring responsible use.

As AI systems become more advanced, continuous monitoring, ethical review, and policy updates will be necessary. The goal is not to limit progress but to guide it in a direction that benefits humanity.

Conclusion

Artificial intelligence is one of the most powerful technologies of the modern era, and its influence will continue to grow in the coming years. Because of its potential impact, defining clear boundaries for AI is essential.

Governments, technology companies, researchers, international organizations, and the public all play a role in shaping how AI develops. Through cooperation, ethical guidelines, and responsible innovation, society can ensure that artificial intelligence remains a tool that supports human progress while protecting fundamental values.