As of 2026, deepfakes and other forms of manipulated media pose a significant challenge to digital trust. As AI-generated content becomes more realistic and widespread, individuals, organizations, and governments face the critical task of safeguarding truth and authenticity online.

Understanding Deepfakes

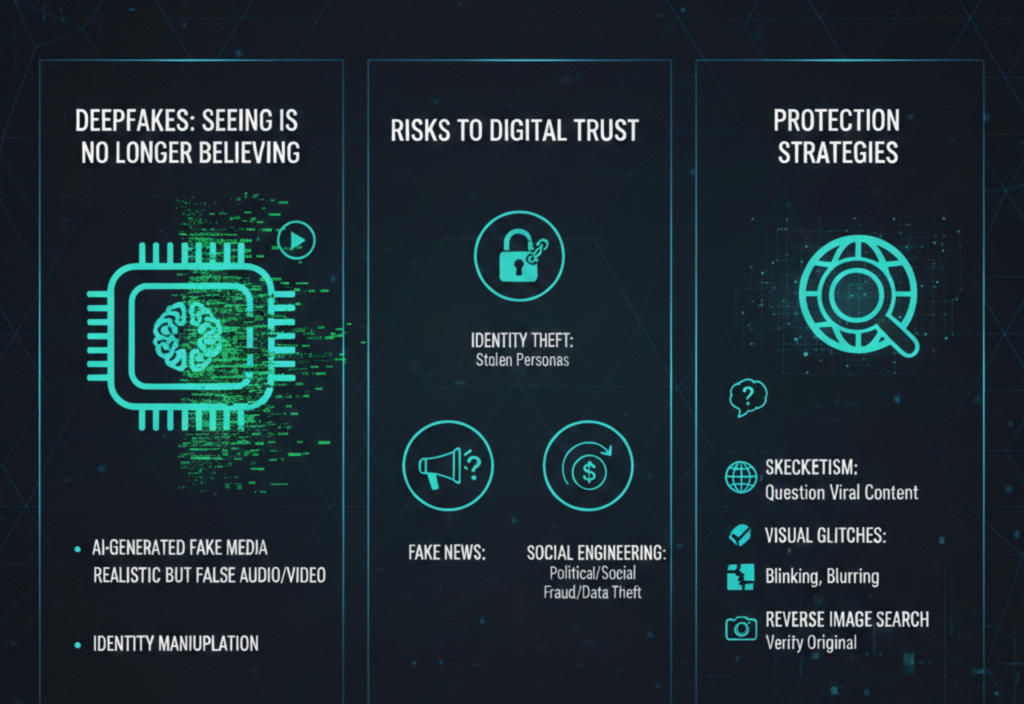

Deepfakes are AI-generated or AI-altered images, videos, or audio clips that make it appear as though someone said or did something they did not. They are produced with deep learning techniques that generate hyper-realistic content, which can be difficult to distinguish from real media.

While deepfakes have legitimate applications, such as in entertainment, film production, or digital marketing, they also raise concerns when misused to spread misinformation, impersonate individuals, or manipulate public opinion.

The Threat of Misinformation

Misinformation refers to the spread of false or misleading information, whether intentional or not. When combined with deepfakes, it can have serious consequences:

Political manipulation: False content can influence elections or public policies.

Financial fraud: Fake videos or audio can be used to scam individuals or organizations.

Social harm: Misleading media can incite panic, discrimination, or conflict.

The speed and reach of social media amplify these risks, making digital trust harder to maintain.

Why Digital Trust Matters

Digital trust is the confidence that online content is authentic, reliable, and credible. It is a cornerstone of:

News and journalism: Ensuring accurate reporting.

E-commerce and financial services: Protecting transactions and personal data.

Social interactions: Preserving reputation and accountability.

Without trust, users may doubt everything online, which can harm societies and economies.

Strategies to Protect Reality Online

1. Detection and Verification Tools

AI-powered solutions now exist to detect manipulated media. These tools analyze:

Inconsistencies in facial movements

Audio-video mismatches

Metadata anomalies

Organizations are integrating these systems to flag deepfakes before they spread widely.
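Of the three signals listed above, metadata anomalies are the easiest to illustrate without a trained model. The following is a minimal sketch, not a production detector: the field names (`created`, `modified`, `software`) and the list of suspicious software hints are assumptions made for this example, and real files expose metadata in format-specific ways.

```python
from datetime import datetime

# Hypothetical hints for this example only; not an authoritative list of tools.
SUSPICIOUS_SOFTWARE_HINTS = ("faceswap", "deepfake")


def metadata_anomalies(meta: dict) -> list[str]:
    """Return human-readable reasons a media file's metadata looks inconsistent.

    `meta` is an assumed, already-extracted dict with optional ISO 8601
    strings under "created" and "modified" and a free-text "software" tag.
    """
    reasons = []
    created = meta.get("created")
    modified = meta.get("modified")

    # A missing creation timestamp is a weak signal on its own, but worth noting.
    if created is None:
        reasons.append("missing creation timestamp")

    # A file cannot normally be modified before it was created.
    if created and modified:
        if datetime.fromisoformat(modified) < datetime.fromisoformat(created):
            reasons.append("modified before created")

    # Some tools write their own name into the software tag.
    software = (meta.get("software") or "").lower()
    if any(hint in software for hint in SUSPICIOUS_SOFTWARE_HINTS):
        reasons.append("software tag names a synthesis tool")

    return reasons
```

An empty result does not mean the media is authentic, since metadata can be stripped or rewritten. A check like this is best treated as one input alongside the visual and audio signals.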

2. Digital Literacy and Awareness

Education plays a crucial role in protecting digital trust. Users should:

Verify sources before sharing content

Cross-check news from multiple outlets

Learn how deepfakes are created and recognized

Empowering individuals with knowledge reduces the effectiveness of misinformation campaigns.

3. Transparent AI Practices

Tech companies can maintain trust by:

Clearly labeling AI-generated content

Providing context for manipulated media

Creating reporting mechanisms for suspicious content

Transparency helps users distinguish AI-generated media from authentic content.
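One way to make an AI-content label checkable is to bind it to the exact bytes it describes. The sketch below assumes a simple, hypothetical label format (the `ai_generated`, `generator`, and `sha256` fields are illustrative, not taken from any standard) and uses only Python's standard `hashlib` module.

```python
import hashlib


def make_label(content: bytes, generator: str) -> dict:
    """Build a hypothetical AI-content label tied to the bytes it describes."""
    return {
        "ai_generated": True,
        "generator": generator,  # illustrative field: name of the generating tool
        "sha256": hashlib.sha256(content).hexdigest(),
    }


def label_matches(content: bytes, label: dict) -> bool:
    """Return True if the content is byte-identical to what was labeled."""
    return hashlib.sha256(content).hexdigest() == label.get("sha256")


label = make_label(b"frame-bytes", "example-model")
print(label_matches(b"frame-bytes", label))         # unchanged content
print(label_matches(b"frame-bytes-edited", label))  # content altered after labeling
```

A bare hash only shows that the media has not changed since it was labeled. It does not prove who created the label, so a practical scheme would also need the label to be signed and to survive re-encoding, which this sketch does not attempt.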

4. Legal and Ethical Frameworks

Governments and organizations are introducing policies to regulate deepfakes:

Anti-impersonation laws

Restrictions on malicious media distribution

Ethical guidelines for AI content creation

These frameworks protect individuals and strengthen societal trust in digital environments.

The Role of Ethical AI

AI is both a cause of the problem and part of the solution. While AI enables the creation of realistic deepfakes, it is also used to detect and mitigate manipulated content. Ethical AI development includes:

Prioritizing transparency

Avoiding harm or exploitation

Ensuring accountability in AI deployment

Responsible AI practices support digital trust and societal well-being.

The Future Outlook

In 2026 and beyond, the interplay of deepfakes, misinformation, and digital trust will shape how society interacts online. Key trends include:

Automated verification tools becoming standard on social media platforms

AI literacy education integrated into schools and workplaces

Cross-border collaborations to enforce legal and ethical standards

Public awareness campaigns to empower users to recognize fake media

The digital ecosystem will rely on both technology and human judgment to maintain trust.

Final Thoughts

Deepfakes and misinformation are not going away, but a combination of AI detection, education, transparency, and ethical governance can preserve reality online. Digital trust is fragile, but it can be strengthened with proactive strategies and responsible AI practices.